Redis Cache Invalidation Strategies Explained with C# Examples and Best Practices

What Is Cache Invalidation?

Cache invalidation is the process of removing or updating outdated data stored in Redis when the original data source changes. Since Redis is usually used as a high-speed cache layer in front of databases, stale cache data can cause users to see incorrect or outdated information. A good invalidation strategy ensures that cached data remains reasonably fresh while still improving performance and reducing database load.

Cache invalidation is often considered one of the hardest problems in distributed systems because applications must balance consistency, performance, scalability, and simplicity. Different systems require different approaches depending on how critical data accuracy is and how frequently the data changes.

Why Cache Invalidation Matters

Without proper invalidation, Redis may continue serving stale values long after the database has changed. For example, if a product price changes in the database but the Redis cache still contains the old value, users may see incorrect pricing information. This can lead to inconsistent user experiences and business problems.

On the other hand, invalidating the cache too aggressively reduces the performance benefits of Redis because the application repeatedly fetches data from the database. The goal is to invalidate the cache intelligently so that systems remain both fast and reasonably consistent.

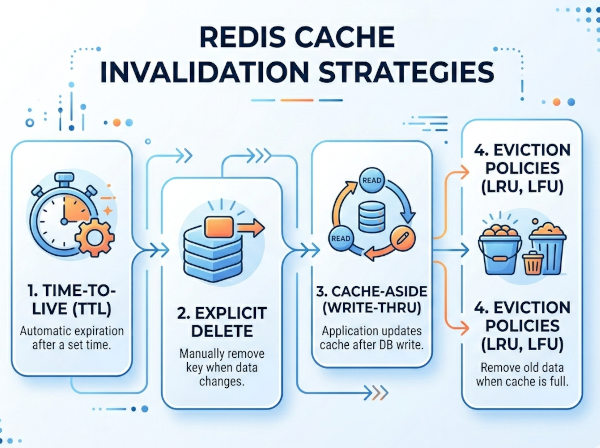

Common Redis Cache Invalidation Strategies

1. Time-Based Expiration (TTL)

Time-based expiration is the simplest and most commonly used invalidation strategy. Each cache entry receives a TTL (Time To Live), and Redis automatically removes the key after the expiration time passes.

This approach works well for data that changes periodically but does not require immediate consistency. For example, product catalogs, news feeds, or analytics dashboards can tolerate a few minutes of stale data. TTL-based invalidation is easy to implement and reduces operational complexity because Redis handles expiration automatically.

However, the main disadvantage is that stale data may remain in cache until the TTL expires. If data changes immediately after caching, users may still see outdated information for the remainder of the expiration window.

Example: TTL Cache in C#

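```csharp
using StackExchange.Redis;
using System;

// Connect to a local Redis instance on the default port (6379).
var redis = ConnectionMultiplexer.Connect("localhost");
var db = redis.GetDatabase();

string cacheKey = "product:1001";

// Store the value with a 10-minute TTL; Redis deletes the key automatically when it expires.
db.StringSet(cacheKey, "Gaming Laptop", TimeSpan.FromMinutes(10));

Console.WriteLine("Cached with TTL");
```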

In this example, Redis automatically removes the cache entry after 10 minutes. No manual invalidation is required.

2. Cache-Aside Pattern (Lazy Loading)

The cache-aside pattern is one of the most widely used Redis caching strategies. In this model, the application first checks Redis for data. If the data does not exist, the application retrieves it from the database, stores it in Redis, and then returns it to the user.

When data changes, the application explicitly deletes the corresponding Redis key. The next request then reloads fresh data from the database into the cache.

This strategy is highly efficient because only requested data is cached. It also avoids filling Redis with unnecessary entries. However, developers must ensure that cache deletion happens correctly whenever the database changes.

Cache-Aside Flow

• User requests product data.

• Application checks Redis.

• Cache miss occurs.

• Data loaded from database.

• Data stored in Redis.

• Future requests use Redis cache.

• Database update triggers cache deletion.

Example: Cache-Aside in C#

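```csharp
using StackExchange.Redis;
using System;

public class ProductService
{
    private readonly IDatabase _cache;

    // ConnectionMultiplexer is designed to be created once and shared,
    // so it is injected here instead of being created per service instance.
    public ProductService(IConnectionMultiplexer redis)
    {
        _cache = redis.GetDatabase();
    }

    public string GetProduct(int productId)
    {
        string cacheKey = $"product:{productId}";

        // 1. Check Redis first.
        RedisValue cachedProduct = _cache.StringGet(cacheKey);
        if (cachedProduct.HasValue)
        {
            Console.WriteLine("Loaded from cache");
            return cachedProduct.ToString();
        }

        // 2. Cache miss: load from the database (simulated here).
        Console.WriteLine("Loaded from database");
        string product = "Gaming Laptop";

        // 3. Populate the cache, with a TTL as a safety net.
        _cache.StringSet(cacheKey, product, TimeSpan.FromMinutes(30));
        return product;
    }

    public void UpdateProduct(int productId)
    {
        // Update the database first (simulated here)...
        Console.WriteLine("Database updated");

        // ...then delete the cached entry so the next read reloads fresh data.
        string cacheKey = $"product:{productId}";
        _cache.KeyDelete(cacheKey);
    }
}
```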

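This strategy removes outdated cache entries as soon as the database update completes. Deleting the key after the database write (rather than before it) narrows, but does not fully close, the window in which a concurrent read can put an old value back into the cache.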

3. Write-Through Cache

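In the write-through pattern, every write goes to both the database and Redis as part of the same operation: the application saves the change to the database and then immediately writes the new value to the cache. As long as both writes succeed, Redis holds the latest value because updates refresh the cache instead of waiting for it to expire.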

This approach provides strong cache consistency and simplifies reads because cache entries are almost always valid. However, write operations become slower because every update must go through both systems.

Write-through caching is useful for systems where read performance is critical and stale data is unacceptable, such as user session management or financial account summaries.

Example Flow

• User updates profile.

• Application writes to database.

• Application updates Redis cache immediately.

• Future reads return fresh cache data.

Example: Write-Through in C#

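```csharp
using StackExchange.Redis;
using System;

public class UserService
{
    private readonly IDatabase _cache;

    // Share one ConnectionMultiplexer across the application and inject it.
    public UserService(IConnectionMultiplexer redis)
    {
        _cache = redis.GetDatabase();
    }

    public void UpdateUser(int userId, string name)
    {
        // 1. Persist the change to the database first (simulated here).
        Console.WriteLine("Saving to database");

        // 2. Write the same value to Redis in the same operation,
        //    so subsequent reads hit a fresh cache entry.
        string cacheKey = $"user:{userId}";
        _cache.StringSet(cacheKey, name, TimeSpan.FromHours(1));

        Console.WriteLine("Cache updated");
    }
}
```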

4. Write-Behind (Write-Back) Cache

In write-behind caching, the application writes changes to Redis first and updates the database asynchronously later. This improves write performance because Redis operations are extremely fast.

This strategy is commonly used in high-throughput systems such as analytics pipelines, telemetry collection, or gaming leaderboards. However, there is a risk of data loss if Redis crashes before pending updates are written to the database.

Write-behind caching trades consistency for performance and scalability.
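The write-behind flow can be sketched with a Redis list acting as the pending-write buffer. This is a minimal sketch under stated assumptions: the queue key `pending-writes:user` and the `saveToDatabase` delegate are hypothetical stand-ins for your own naming and persistence code, and `Flush` is assumed to be called periodically by a background worker.

```csharp
using StackExchange.Redis;
using System;

// Minimal write-behind sketch. The queue key and the saveToDatabase
// delegate are hypothetical placeholders, not part of any library.
public class WriteBehindUserCache
{
    private const string QueueKey = "pending-writes:user"; // hypothetical key name
    private readonly IDatabase _db;
    private readonly Action<int, string> _saveToDatabase;

    public WriteBehindUserCache(IConnectionMultiplexer redis, Action<int, string> saveToDatabase)
    {
        _db = redis.GetDatabase();
        _saveToDatabase = saveToDatabase;
    }

    // Fast path: update Redis and enqueue the change; the database is not touched yet.
    public void UpdateUser(int userId, string name)
    {
        _db.StringSet($"user:{userId}", name, TimeSpan.FromHours(1));
        _db.ListLeftPush(QueueKey, $"{userId}|{name}"); // LPUSH + RPOP gives FIFO order
    }

    // Drains up to maxBatch queued changes into the database; returns how many were flushed.
    public int Flush(int maxBatch = 100)
    {
        int flushed = 0;
        while (flushed < maxBatch)
        {
            RedisValue item = _db.ListRightPop(QueueKey);
            if (item.IsNull) break; // queue is empty

            // Split on the first '|' only, so names containing '|' survive.
            string[] parts = item.ToString().Split('|', 2);
            _saveToDatabase(int.Parse(parts[0]), parts[1]);
            flushed++;
        }
        return flushed;
    }
}
```

Because `ListRightPop` removes an item before it is persisted, a crash or exception inside `saveToDatabase` drops that record, which is the data-loss risk described above. A more durable setup would typically use a Redis Stream with consumer groups, or a reliable-queue pattern that moves items to a processing list and removes them only after the database write succeeds.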

5. Event-Driven Cache Invalidation

In distributed systems and microservices, applications often use event-driven invalidation. When data changes, a service publishes an event such as ProductUpdated or UserDeleted. Other services listening for these events invalidate related Redis keys.

This approach is highly scalable because services remain loosely coupled. Instead of directly coordinating invalidation logic across systems, events distribute changes asynchronously.

Event-driven invalidation works especially well in systems using Kafka, RabbitMQ, or Azure Service Bus.

Example Event Flow

• Product service updates database.

• ProductUpdated event published.

• Inventory service invalidates Redis cache.

• Recommendation service refreshes product data.

• Search index updates asynchronously.
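On the consuming side, invalidation usually reduces to deleting keys when an event arrives. The sketch below assumes a hypothetical `ProductUpdated` event type and leaves the broker wiring out, since Kafka, RabbitMQ and Azure Service Bus clients each have their own consumer APIs; only the Redis calls come from StackExchange.Redis.

```csharp
using StackExchange.Redis;
using System.Threading.Tasks;

// Hypothetical event contract; the real shape depends on your messaging setup.
public record ProductUpdated(int ProductId);

// Transport-agnostic handler: call HandleAsync from whatever consumer
// callback your broker client provides.
public class ProductCacheInvalidationHandler
{
    private readonly IDatabase _cache;

    public ProductCacheInvalidationHandler(IConnectionMultiplexer redis)
    {
        _cache = redis.GetDatabase();
    }

    // Returns true if a cached entry was removed, false if none was cached.
    public async Task<bool> HandleAsync(ProductUpdated evt)
    {
        // Deleting a key that is already gone is harmless, so a redelivered
        // (duplicate) event does not cause problems: the handler is idempotent.
        return await _cache.KeyDeleteAsync($"product:{evt.ProductId}");
    }
}
```

Message brokers commonly deliver at least once, which is why the idempotent delete matters: processing the same `ProductUpdated` event twice leaves the cache in the same state as processing it once.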

6. Tag-Based Invalidation

Tag-based invalidation groups related cache entries under logical tags. Instead of deleting keys individually, applications invalidate all cache entries associated with a specific tag.

For example, if a category changes, all products within that category can be invalidated together. This is useful in content-heavy systems such as CMS platforms or e-commerce applications.

Redis does not support tags natively, so developers typically implement this manually using Redis Sets.

Example Tag Structure

category:electronics

-> product:1001

-> product:1002

-> product:1003

When the electronics category changes, the application deletes all associated product cache keys.
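A minimal sketch of tags on top of Redis Sets follows. It assumes a `tag:` prefix for the set keys, an illustrative convention rather than anything Redis defines, so the members of the `category:electronics` tag above would live in the set `tag:category:electronics`.

```csharp
using StackExchange.Redis;
using System;
using System.Linq;

// Tag-based invalidation using Redis Sets. The "tag:" prefix is an
// illustrative naming convention, not part of Redis or the client library.
public class TaggedCache
{
    private readonly IDatabase _db;

    public TaggedCache(IConnectionMultiplexer redis) => _db = redis.GetDatabase();

    // Cache a value and record its key in the set for each tag.
    public void Set(string key, string value, TimeSpan ttl, params string[] tags)
    {
        _db.StringSet(key, value, ttl);
        foreach (string tag in tags)
        {
            _db.SetAdd($"tag:{tag}", key);
        }
    }

    // Delete every key registered under the tag, then the tag set itself.
    // Returns the number of cache entries actually deleted.
    public long InvalidateTag(string tag)
    {
        RedisKey tagKey = $"tag:{tag}";
        RedisKey[] keys = _db.SetMembers(tagKey)
                             .Select(member => (RedisKey)member.ToString())
                             .ToArray();
        long deleted = keys.Length > 0 ? _db.KeyDelete(keys) : 0;
        _db.KeyDelete(tagKey);
        return deleted;
    }
}
```

This sketch is not atomic: a key tagged between the `SetMembers` call and the deletion of the tag set loses its tag membership and stays cached until its TTL expires, which is one more reason to keep TTLs on tagged entries.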

7. Version-Based Invalidation

Version-based invalidation avoids deleting keys directly. Instead, each cache key includes a version number, and the current version is tracked separately (for example, in a Redis counter). When data changes, the application increments the version, and all subsequent reads and writes use the new key.

Old cache entries eventually expire naturally through TTL policies. This approach is useful in highly concurrent systems because a request that is still working with the old version can only write to the old key, which readers no longer consult, so it cannot overwrite fresh data.

Example Cache Keys

product:1001:v1

product:1001:v2

product:1001:v3

After updating the product, the application switches to the next version key.
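One way to implement this is to keep the current version in a separate Redis counter and bump it with `INCR` (`StringIncrement` in StackExchange.Redis), which is atomic. This sketch assumes a `product:{id}:version` counter key, a hypothetical naming choice, and treats a missing counter as version 1 so the keys line up with the `v1`, `v2`, `v3` examples above.

```csharp
using StackExchange.Redis;
using System;

// Version-based invalidation: a per-product counter selects the current key.
// The "product:{id}:version" counter key is an assumed naming scheme.
public class VersionedProductCache
{
    private readonly IDatabase _db;

    public VersionedProductCache(IConnectionMultiplexer redis) => _db = redis.GetDatabase();

    private static string VersionKey(int productId) => $"product:{productId}:version";

    private long CurrentVersion(int productId)
    {
        RedisValue counter = _db.StringGet(VersionKey(productId));
        // No counter yet means the product has never been invalidated: version 1.
        return (counter.HasValue ? (long)counter : 0) + 1;
    }

    public string CurrentKey(int productId) => $"product:{productId}:v{CurrentVersion(productId)}";

    public void Set(int productId, string value) =>
        _db.StringSet(CurrentKey(productId), value, TimeSpan.FromMinutes(30));

    public string? Get(int productId) => _db.StringGet(CurrentKey(productId));

    // Call after the database update. INCR is atomic, so concurrent updates
    // each move to a new version; old keys are left to expire via their TTL.
    public long Invalidate(int productId) => _db.StringIncrement(VersionKey(productId)) + 1;
}
```

The trade-off is an extra round trip per read to fetch the version number; in exchange, nothing is ever deleted, and a slow request holding an old version can only write to a key that readers have already moved past.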

8. Pub/Sub-Based Invalidation

Redis Pub/Sub allows applications to broadcast invalidation messages across multiple application instances. When one instance updates data, it publishes a message that other instances receive and use to invalidate local caches.

This strategy is useful in horizontally scaled environments where multiple servers maintain independent in-memory caches alongside Redis.

Example Pub/Sub Flow

• Application updates product.

• Application publishes an invalidation message to a Redis channel.

• All application nodes receive notification.

• Local caches are cleared.
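The flow above can be sketched with StackExchange.Redis's `ISubscriber`, assuming each node keeps its local cache in a `ConcurrentDictionary` and that invalidation messages carry the cache key. The channel name `cache-invalidation` is an illustrative choice, and `RedisChannel.Literal` assumes a recent 2.x release of the library.

```csharp
using StackExchange.Redis;
using System.Collections.Concurrent;

// Each application node owns one instance: a local in-memory cache plus a
// subscription that clears entries when any node publishes their key.
public class LocalCacheInvalidator
{
    // Illustrative channel name; all nodes must agree on it.
    private static readonly RedisChannel Channel = RedisChannel.Literal("cache-invalidation");
    private readonly ISubscriber _subscriber;

    public ConcurrentDictionary<string, string> LocalCache { get; } = new();

    public LocalCacheInvalidator(IConnectionMultiplexer redis)
    {
        _subscriber = redis.GetSubscriber();
        // Runs on every subscribed node (including the publisher) for each message.
        _subscriber.Subscribe(Channel, (_, message) => LocalCache.TryRemove(message.ToString(), out _));
    }

    // Called by the node that changed the data, after the database write.
    // Returns the number of subscribers that received the message.
    public long PublishInvalidation(string key) => _subscriber.Publish(Channel, key);
}
```

Redis Pub/Sub delivers messages only to subscribers that are connected at the moment of publishing, so a node that reconnects after a network blip will have missed them. A short TTL on local entries limits how long such a node can serve stale data.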

Best Strategy for Different Use Cases

E-Commerce Platforms

E-commerce systems commonly combine cache-aside with TTL expiration. Product information is cached lazily, while updates explicitly invalidate affected keys. TTL acts as a safety mechanism in case invalidation events fail.

This combination balances performance and consistency while keeping implementation relatively simple.

Real-Time Financial Systems

Financial systems typically require write-through caching or event-driven invalidation because stale balances or transactions can create serious business problems. Consistency is more important than raw performance.

These systems also frequently combine Redis with distributed locking and transaction management.

Analytics and Telemetry Systems

Analytics platforms often use write-behind caching because extremely high write throughput matters more than immediate consistency. Events and metrics are buffered in Redis and flushed to databases asynchronously.

This dramatically reduces database pressure during traffic spikes.

CMS and Content Platforms

Content management systems frequently use tag-based invalidation because large groups of related content may change together. Invalidating entire categories or sections becomes easier than deleting individual cache keys manually.

Common Redis Cache Invalidation Problems

Cache Stampede

A cache stampede occurs when many requests simultaneously try to rebuild expired cache entries. This can overload the database because all requests bypass Redis at the same time.

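Developers often solve this using distributed locks (so that only one request rebuilds the entry), request coalescing, or randomized (jittered) TTLs so that related entries do not expire at the same moment.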
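The sketch below combines two of these techniques: a short-lived Redis lock (`LockTake`/`LockRelease` in StackExchange.Redis) so that only one caller rebuilds a missing entry, and a jittered TTL so entries written together do not expire together. The `lock:` key prefix, the 10-second lock expiry, the retry timings and the 20% jitter are assumptions to tune, and `Random.Shared` requires .NET 6 or later.

```csharp
using StackExchange.Redis;
using System;
using System.Threading;

// Cache-aside read that lets only one caller at a time rebuild a missing entry.
// The "lock:" prefix, lock expiry, retry timings and jitter range are assumptions.
public class StampedeSafeCache
{
    private readonly IDatabase _db;

    public StampedeSafeCache(IConnectionMultiplexer redis) => _db = redis.GetDatabase();

    // Adds 0-20% random jitter so entries written together do not expire together.
    public static TimeSpan JitteredTtl(TimeSpan baseTtl) =>
        baseTtl + TimeSpan.FromMilliseconds(baseTtl.TotalMilliseconds * 0.2 * Random.Shared.NextDouble());

    public string GetOrRebuild(string key, Func<string> loadFromDatabase, TimeSpan ttl)
    {
        string lockKey = $"lock:{key}";

        for (int attempt = 0; attempt < 50; attempt++)
        {
            RedisValue cached = _db.StringGet(key);
            if (cached.HasValue) return cached.ToString();

            // A unique token ensures a caller only ever releases its own lock.
            string token = Guid.NewGuid().ToString();
            if (_db.LockTake(lockKey, token, TimeSpan.FromSeconds(10)))
            {
                try
                {
                    // Re-check: another caller may have rebuilt the entry just before we got the lock.
                    cached = _db.StringGet(key);
                    if (cached.HasValue) return cached.ToString();

                    string value = loadFromDatabase();
                    _db.StringSet(key, value, JitteredTtl(ttl));
                    return value;
                }
                finally
                {
                    _db.LockRelease(lockKey, token);
                }
            }

            // Another caller is rebuilding: wait briefly, then check the cache again.
            Thread.Sleep(50);
        }

        // Stop waiting (e.g. the rebuilding caller is very slow) and load directly.
        return loadFromDatabase();
    }
}
```

Only the lock holder queries the database; everyone else polls Redis until the entry appears. The lock's own expiry prevents a crashed holder from blocking rebuilds forever.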

Stale Data

Stale data appears when invalidation fails or propagation delays occur. Event-driven systems especially must handle retry logic carefully to ensure invalidation events are not lost.

Race Conditions

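Race conditions happen when multiple updates and invalidations occur concurrently. For example, a read may load the old value from the database just before an update, then write it back to Redis just after the update deletes the key, leaving stale data cached until the TTL expires.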

Version-based invalidation and distributed locking can reduce these issues.

Redis Cache Invalidation Best Practices

Always Use TTL as a Safety Net

Even if applications use explicit invalidation, TTL expiration should still exist as a fallback mechanism. This prevents stale entries from remaining forever if invalidation logic fails unexpectedly.

Use Consistent Cache Key Naming

Structured cache keys improve maintainability and simplify invalidation logic.

Example:

user:1001

product:2005

order:5001
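One lightweight way to keep key names consistent is to build them in a single place rather than formatting strings throughout the codebase. `CacheKeys` below is a hypothetical helper, not a library type.

```csharp
// Central definition of cache key formats, so the code that writes an entry
// and the code that invalidates it cannot drift apart. Hypothetical helper.
public static class CacheKeys
{
    public static string User(int userId) => $"user:{userId}";
    public static string Product(int productId) => $"product:{productId}";
    public static string Order(int orderId) => $"order:{orderId}";
}
```

Readers and invalidators then share the same format, for example `_cache.KeyDelete(CacheKeys.Product(2005))`, so a typo in one place cannot leave a stale entry behind.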

Monitor Cache Hit Ratios

A low cache hit ratio may indicate overly aggressive invalidation or poor cache design. Monitoring helps optimize TTL durations and invalidation frequency.
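Redis exposes `keyspace_hits` and `keyspace_misses` counters in the `stats` section of the `INFO` command, from which a hit ratio can be derived. This sketch reads them through StackExchange.Redis's `IServer.Info`. Note that these counters are server-wide (every client, since the last restart or `CONFIG RESETSTAT`), so a per-application hit ratio would need your own counters.

```csharp
using StackExchange.Redis;
using System.Linq;

public static class CacheMetrics
{
    // Hit ratio = hits / (hits + misses); returns 0 when there has been no traffic yet.
    public static double HitRatio(long hits, long misses) =>
        hits + misses == 0 ? 0.0 : (double)hits / (hits + misses);

    // Reads the server-wide keyspace_hits / keyspace_misses counters from INFO stats.
    public static double ReadHitRatio(IServer server)
    {
        var stats = server.Info("stats")
                          .SelectMany(group => group)
                          .ToDictionary(pair => pair.Key, pair => pair.Value);
        long hits = long.Parse(stats["keyspace_hits"]);
        long misses = long.Parse(stats["keyspace_misses"]);
        return HitRatio(hits, misses);
    }
}
```

For example, `CacheMetrics.ReadHitRatio(redis.GetServer("localhost", 6379))`, where `redis` is a connected `ConnectionMultiplexer`, returns a value between 0 and 1 that can be tracked over time alongside TTL changes.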

Avoid Caching Highly Volatile Data

Data that changes constantly may create excessive invalidation overhead. In some cases, directly querying the database is more efficient than repeatedly updating cache entries.

Comparison of the Most Common Strategies

| Strategy | Consistency | Performance | Complexity | Best Use Case |
|---|---|---|---|---|
| TTL Expiration | Medium | High | Low | General-purpose caching |
| Cache-Aside | High | High | Medium | E-commerce platforms |
| Write-Through | Very High | Medium | Medium | Financial systems |
| Write-Behind | Low | Very High | High | Analytics systems |
| Event-Driven | High | High | High | Microservices |