Load Balancer Types Explained: Layer 4, Layer 7, Hardware, Software, and Cloud Load Balancers

A load balancer is a system that distributes incoming traffic across multiple servers to improve scalability, availability, and reliability. Instead of sending all traffic to a single machine, it spreads requests according to a routing algorithm so that no individual server becomes overloaded.

Without load balancing, a single high-traffic server can quickly become a bottleneck or single point of failure. Modern distributed applications rely heavily on load balancers to maintain uptime during traffic spikes, infrastructure failures, and deployments.

A simplified traffic flow looks like this:

Clients → Load Balancer → Servers

Why Do We Need Load Balancers?

Load balancers solve several major infrastructure problems simultaneously.

They improve scalability by allowing applications to run across multiple backend servers instead of relying on a single machine. As traffic grows, additional servers can be added behind the load balancer without changing the public endpoint users access.

They also improve reliability. If one server crashes, the load balancer detects the failure through health checks and sends new traffic only to the remaining healthy servers. This fault tolerance is essential for production systems where downtime directly affects users and revenue.

Another important advantage is deployment flexibility. Teams can perform rolling deployments, maintenance, and infrastructure upgrades gradually without taking the application offline.

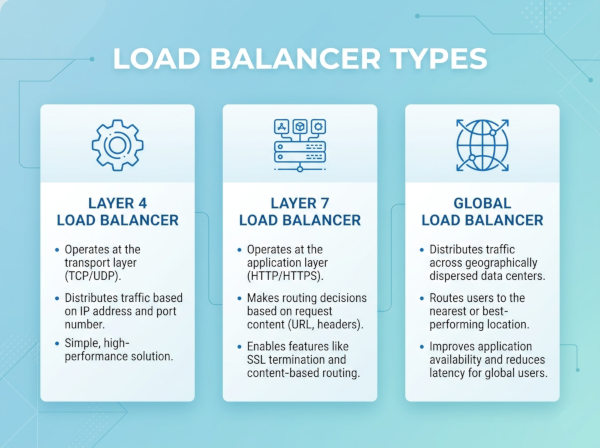

Main Types of Load Balancers

1. Layer 4 Load Balancer

A Layer 4 load balancer operates at the transport layer of the OSI model using TCP or UDP information. It routes traffic based on IP addresses and ports without inspecting the actual application content.

The decision process is conceptually similar to:

IP + Port → TargetServer

Layer 4 balancing is extremely fast because it does not analyze HTTP headers, cookies, or URLs. It simply forwards packets efficiently between clients and backend servers.
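As a rough illustration of a decision made purely from transport-level data, the sketch below keeps a small flow table. The first packet of a connection is assigned a backend, and later packets with the same protocol, addresses, and ports reuse it. The `FlowKey` and `Layer4FlowTable` names and the backend list are hypothetical, and the class is deliberately not thread-safe. Real Layer 4 balancers usually do this in the kernel or in dedicated hardware rather than in application code.

```csharp
using System;
using System.Collections.Generic;

// Hypothetical key: everything a Layer 4 balancer looks at, and nothing from HTTP.
public readonly record struct FlowKey(
    string Protocol, string SourceIp, int SourcePort, string DestinationIp, int DestinationPort);

// Minimal, single-threaded sketch of a connection (flow) table.
public class Layer4FlowTable
{
    private readonly string[] _backends;
    private readonly Dictionary<FlowKey, string> _flows = new();
    private int _next;

    public Layer4FlowTable(params string[] backends)
    {
        if (backends.Length == 0)
            throw new ArgumentException("At least one backend is required.", nameof(backends));
        _backends = backends;
    }

    public string Route(FlowKey flow)
    {
        // Packets of a known connection always go to the backend chosen earlier.
        if (_flows.TryGetValue(flow, out var existing))
            return existing;

        // New connection: assign the next backend in rotation and remember it.
        var backend = _backends[_next];
        _next = (_next + 1) % _backends.Length;
        _flows[flow] = backend;
        return backend;
    }

    // Call when the connection closes so the table does not grow without bound.
    public void Close(FlowKey flow) => _flows.Remove(flow);
}
```

Nothing in `Route` reads a URL, header, or cookie, which is why this style of balancing stays cheap and also why it cannot route on request content.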

Common examples include:

• HAProxy (in TCP mode)

• Nginx (using the stream module)

• Amazon Web Services Network Load Balancer

Advantages of Layer 4 Load Balancing

Layer 4 balancing provides very high performance and low latency because packet inspection is minimal. It works especially well for raw TCP services such as gaming servers, databases, messaging systems, and VoIP applications.

It also scales efficiently under extremely high traffic loads because the balancing logic remains lightweight.

Disadvantages of Layer 4 Load Balancing

Since Layer 4 balancers do not inspect application-level data, they cannot make routing decisions based on URLs, cookies, HTTP headers, or user sessions.

This limitation reduces flexibility for complex web applications requiring intelligent request routing.

2. Layer 7 Load Balancer

A Layer 7 load balancer operates at the application layer and understands protocols such as HTTP and HTTPS. Unlike Layer 4 systems, it can inspect request contents before deciding where traffic should go.

Routing decisions may depend on:

• URL path

• HTTP headers

• Cookies

• Query parameters

• User authentication

• Geographic location

For example:

URL → Service

A request to:

/api/users

may route to one service, while:

/images

routes to another infrastructure cluster.
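The path-based routing described above can be sketched as a longest-prefix lookup. The `PathRouter` class, the route table, and the backend URLs are placeholders chosen for illustration, not the configuration of any specific product.

```csharp
using System;
using System.Collections.Generic;

// Hypothetical sketch: map URL path prefixes to backend services.
public class PathRouter
{
    // Assumed routing table; prefixes and backend URLs are placeholders.
    private readonly List<(string Prefix, string Backend)> _routes = new()
    {
        ("/api/users", "https://users-service"),
        ("/images", "https://image-cluster"),
    };

    private const string DefaultBackend = "https://default-backend";

    public string Resolve(string path)
    {
        string best = DefaultBackend;
        int bestLength = -1;

        foreach (var (prefix, backend) in _routes)
        {
            // Match whole path segments only, so "/images" does not match "/imagesearch".
            bool matches =
                path.Equals(prefix, StringComparison.OrdinalIgnoreCase) ||
                path.StartsWith(prefix + "/", StringComparison.OrdinalIgnoreCase);

            // Prefer the longest matching prefix when several routes apply.
            if (matches && prefix.Length > bestLength)
            {
                best = backend;
                bestLength = prefix.Length;
            }
        }

        return best;
    }
}
```

The same lookup shape extends to headers or cookies: the balancer needs the parsed request before it can choose a backend, which is the extra work a Layer 4 balancer skips.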

Advantages of Layer 7 Load Balancing

Layer 7 balancing provides advanced routing flexibility for modern microservices and API-driven systems. It supports TLS (SSL) termination, caching, authentication integration, request rewriting, and content-aware routing.

This makes it ideal for large-scale web platforms and cloud-native applications.

Disadvantages of Layer 7 Load Balancing

Application-level inspection introduces additional CPU overhead and latency compared to Layer 4 balancing.

Configuration complexity also increases significantly because routing rules become more sophisticated.

3. Hardware Load Balancer

Hardware load balancers are dedicated physical appliances designed specifically for traffic management and high-performance networking.

Examples include:

• F5 BIG-IP

• Citrix ADC (now sold as NetScaler ADC)

These devices are commonly used in large enterprise data centers requiring extremely high throughput and advanced networking features.

Advantages of Hardware Load Balancers

Hardware appliances deliver excellent performance, specialized acceleration, and enterprise-grade reliability. They often include advanced security features, traffic inspection, DDoS protection, and SSL acceleration.

They are optimized specifically for networking workloads.

Disadvantages of Hardware Load Balancers

Hardware solutions are expensive and less flexible than software-based alternatives. Scaling usually requires purchasing additional physical devices, which increases operational complexity and infrastructure cost.

They are also less compatible with dynamic cloud-native environments.

4. Software Load Balancer

Software load balancers run as applications or services on standard servers or containers instead of dedicated hardware.

Popular examples include:

• Nginx

• HAProxy

• Envoy

Software balancers are extremely common in Kubernetes and microservice environments.

Advantages of Software Load Balancers

They are cost-effective, flexible, and easy to automate. Modern DevOps workflows integrate naturally with software-based load balancing because configuration changes can be deployed programmatically.

They also support containerized infrastructure and dynamic scaling effectively.

Disadvantages of Software Load Balancers

When they run on the same hosts as the applications they serve, they compete with those applications for CPU, memory, and network bandwidth.

Performance can also vary depending on server capacity and operating system tuning.

5. Cloud Load Balancer

Cloud providers offer fully managed load balancing services integrated directly into their infrastructure platforms.

Examples include:

• Amazon Web Services Elastic Load Balancing (Application, Network, and Gateway Load Balancers)

• Microsoft Azure Load Balancer

• Google Cloud Load Balancing

These services automatically scale with traffic demand and reduce operational maintenance significantly.

Advantages of Cloud Load Balancers

Cloud-native balancing simplifies infrastructure management dramatically. Teams avoid maintaining hardware, patching software, or manually scaling networking infrastructure.

Managed cloud balancing also integrates with autoscaling groups, monitoring systems, certificates, and cloud security tools.

Disadvantages of Cloud Load Balancers

Organizations become dependent on cloud vendor infrastructure and pricing models.

Advanced customization may also be more limited compared to self-managed solutions.

Load Balancing Algorithms

Different load balancers distribute traffic using different algorithms.

Round Robin

Requests rotate sequentially across servers.

Example flow:

S1 → S2 → S3 → S1 → …

This approach is simple and works well when all servers have similar capacity.

Least Connections

Traffic routes to the server currently handling the fewest active connections.

This strategy performs better when requests have variable processing times.
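A minimal sketch of this strategy, assuming the caller reports when each request finishes, might look like the following. The `LeastConnectionsBalancer` name and its `Acquire`/`Release` methods are illustrative only.

```csharp
using System;

// Hypothetical sketch: track active connections per server and pick the least busy one.
public class LeastConnectionsBalancer
{
    private readonly string[] _servers;
    private readonly int[] _active;
    private readonly object _lock = new();

    public LeastConnectionsBalancer(params string[] servers)
    {
        if (servers.Length == 0)
            throw new ArgumentException("At least one server is required.", nameof(servers));
        _servers = servers;
        _active = new int[servers.Length];
    }

    // Picks the server with the fewest active connections and counts the new one.
    // Ties go to the server listed first.
    public string Acquire()
    {
        lock (_lock)
        {
            int best = 0;
            for (int i = 1; i < _active.Length; i++)
            {
                if (_active[i] < _active[best]) best = i;
            }
            _active[best]++;
            return _servers[best];
        }
    }

    // Call when the request or connection finishes.
    public void Release(string server)
    {
        lock (_lock)
        {
            int index = Array.IndexOf(_servers, server);
            if (index >= 0 && _active[index] > 0) _active[index]--;
        }
    }
}
```

Pairing every `Acquire` with a `Release` (for example in a `try`/`finally`) matters here, because a missed release makes a server look permanently busy.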

IP Hash

Requests from the same client IP consistently route to the same backend server.

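This helps maintain session consistency for stateful applications. The trade-off is that many clients behind one shared NAT address all land on the same server, and with simple modulo hashing the mapping can shift when servers are added or removed.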
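One way to sketch IP hashing is to hash the raw address bytes and take the result modulo the number of servers. The example below uses a 32-bit FNV-1a hash rather than `GetHashCode()`, whose values are not guaranteed to be stable across processes. The `IpHashBalancer` name and server URLs are assumptions made for illustration.

```csharp
using System;
using System.Collections.Generic;
using System.Net;

// Hypothetical sketch: map a client IP to a backend with a stable hash.
public class IpHashBalancer
{
    private readonly IReadOnlyList<string> _servers;

    public IpHashBalancer(IReadOnlyList<string> servers)
    {
        if (servers.Count == 0)
            throw new ArgumentException("At least one server is required.", nameof(servers));
        _servers = servers;
    }

    public string GetServer(IPAddress clientIp)
    {
        // 32-bit FNV-1a over the address bytes (works for IPv4 and IPv6).
        uint hash = 2166136261;
        foreach (byte b in clientIp.GetAddressBytes())
        {
            hash = unchecked((hash ^ b) * 16777619);
        }

        // Simple modulo mapping: changing the server count remaps many clients.
        return _servers[(int)(hash % (uint)_servers.Count)];
    }
}
```

Because the mapping depends on the server count, systems that resize often tend to use consistent hashing instead, which this sketch does not attempt.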

Weighted Routing

Servers receive traffic proportional to their hardware capacity or performance characteristics.

Stronger servers handle more requests than weaker ones.
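Weighted routing can be implemented in several ways. The sketch below uses a smooth weighted round-robin approach, which spreads a heavier server's extra turns across the cycle instead of sending them back to back. The `WeightedBalancer` class and the weights in the usage note are hypothetical.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

// Hypothetical sketch: smooth weighted round robin.
public class WeightedBalancer
{
    private sealed class Entry
    {
        public string Server = "";
        public int Weight;
        public int Current;
    }

    private readonly List<Entry> _entries;
    private readonly int _totalWeight;
    private readonly object _lock = new();

    public WeightedBalancer(IEnumerable<(string Server, int Weight)> servers)
    {
        _entries = servers
            .Select(s => new Entry { Server = s.Server, Weight = s.Weight })
            .ToList();
        if (_entries.Count == 0 || _entries.Any(e => e.Weight <= 0))
            throw new ArgumentException("Provide at least one server, each with a positive weight.");
        _totalWeight = _entries.Sum(e => e.Weight);
    }

    public string GetNextServer()
    {
        lock (_lock)
        {
            // Every server earns credit equal to its weight on each pick.
            Entry best = _entries[0];
            foreach (var entry in _entries)
            {
                entry.Current += entry.Weight;
                if (entry.Current > best.Current) best = entry;
            }

            // The server with the most credit is chosen and pays back the total weight.
            best.Current -= _totalWeight;
            return best.Server;
        }
    }
}
```

With weights of 3 and 1, every four consecutive picks send three requests to the first server and one to the second.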

Health Checks

Modern load balancers continuously monitor backend servers using health checks.

Typical checks include:

• HTTP response validation

• TCP connection tests

• Database connectivity verification

• Custom application probes

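If a server fails its health checks, the load balancer takes it out of rotation and sends traffic to the remaining healthy servers until the failed server passes its checks again.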
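A common pattern is to change a server's state only after several consecutive results, so that a single slow response does not remove it from rotation. The sketch below assumes that pattern. The `HealthTracker` class, its thresholds, the two-second timeout, and the idea of a dedicated health URL are all illustrative choices rather than settings from any particular load balancer.

```csharp
using System;
using System.Collections.Generic;
using System.Net.Http;
using System.Threading;
using System.Threading.Tasks;

// Hypothetical sketch: flip a server's state only after consecutive contrary results.
public class HealthTracker
{
    private readonly int _unhealthyThreshold;
    private readonly int _healthyThreshold;
    private readonly Dictionary<string, (bool Healthy, int Streak)> _states = new();
    private readonly object _lock = new();

    public HealthTracker(int unhealthyThreshold, int healthyThreshold)
    {
        _unhealthyThreshold = unhealthyThreshold;
        _healthyThreshold = healthyThreshold;
    }

    // Servers are assumed healthy until proven otherwise.
    public bool IsHealthy(string server)
    {
        lock (_lock)
        {
            return !_states.TryGetValue(server, out var state) || state.Healthy;
        }
    }

    public void Report(string server, bool success)
    {
        lock (_lock)
        {
            var state = _states.TryGetValue(server, out var s) ? s : (Healthy: true, Streak: 0);

            if (success == state.Healthy)
            {
                state.Streak = 0; // Result agrees with the current state.
            }
            else
            {
                state.Streak++;
                int needed = state.Healthy ? _unhealthyThreshold : _healthyThreshold;
                if (state.Streak >= needed) state = (!state.Healthy, 0);
            }

            _states[server] = state;
        }
    }

    // HTTP probe: any 2xx response within the timeout counts as a success.
    public static async Task<bool> ProbeAsync(HttpClient client, string healthUrl)
    {
        try
        {
            using var cts = new CancellationTokenSource(TimeSpan.FromSeconds(2));
            using var response = await client.GetAsync(healthUrl, cts.Token);
            return response.IsSuccessStatusCode;
        }
        catch (Exception e) when (e is HttpRequestException or OperationCanceledException)
        {
            return false;
        }
    }
}
```

A background loop would typically call `ProbeAsync` for each server on a fixed interval and pass the result to `Report`, while the routing code consults `IsHealthy` before selecting a backend.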

C# Reverse Proxy Example

A simple reverse proxy can be implemented in ASP.NET Core.

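    // Proxy middleware example (minimal sketch)

    // Create the HttpClient once and reuse it. Creating a new client for
    // every request can exhaust sockets under load.
    var httpClient = new HttpClient();

    app.Run(async context =>
    {
        var targetUrl = "https://backend-server/api";

        // Always issues a GET to the fixed target URL, whatever the incoming request was.
        using var response = await httpClient.GetAsync(targetUrl);
        var content = await response.Content.ReadAsStringAsync();

        // Relay the backend's status code and body to the caller.
        context.Response.StatusCode = (int)response.StatusCode;
        await context.Response.WriteAsync(content);
    });

This example sends a GET request to a fixed backend URL for every incoming request and relays the status code and body. A production proxy would also forward the HTTP method, path, headers, and request body.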

C# Load Balancing Example

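    // Simple round-robin balancer (safe to call from concurrent requests)
    using System.Collections.Generic;
    using System.Threading;

    public class RoundRobinBalancer
    {
        private readonly List<string> _servers = new()
        {
            "https://server1",
            "https://server2",
            "https://server3"
        };

        // Starts at -1 so the first increment selects index 0.
        private int _current = -1;

        public string GetNextServer()
        {
            // Interlocked.Increment avoids lost updates when requests arrive in parallel.
            // The cast to uint keeps the index non-negative after the counter overflows.
            var next = unchecked((uint)Interlocked.Increment(ref _current));
            return _servers[(int)(next % (uint)_servers.Count)];
        }
    }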

This implementation cycles requests across multiple backend servers.

Best Real-World Use Cases

E-Commerce Platforms

Online stores experience unpredictable traffic spikes during campaigns and seasonal events. Load balancers distribute traffic across multiple application servers to maintain uptime and prevent overload.

They also help isolate failing servers automatically during heavy traffic periods.

Streaming Platforms

Video streaming services require massive traffic distribution across content delivery systems and media servers. Load balancing helps minimize latency while supporting millions of simultaneous viewers.

Geographic routing also improves performance for global audiences.

Banking and Financial Systems

Financial applications require high availability and fault tolerance because downtime directly affects transactions and customer trust.

Load balancers help ensure uninterrupted service while supporting rolling deployments and disaster recovery strategies.

Common Mistakes About Load Balancing

Assuming Load Balancing Alone Solves Scalability

Load balancers distribute traffic, but they do not automatically optimize databases, caching layers, or application performance.

True scalability requires improvements across the entire architecture.

Ignoring Session Management

Stateful applications may break when requests route unpredictably across servers.

Sticky sessions or distributed session storage may be required depending on application design.

Missing Health Checks

Without proper health monitoring, traffic may continue routing to failing servers.

Reliable health checks are critical for high-availability systems.

Alternative Approaches

Anycast Routing

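Some global systems use Anycast networking instead of a single centralized load balancer. The same IP address is announced from multiple locations, and network routing delivers each client to the closest location in routing terms.

This usually reduces latency for users spread across the world.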

Service Mesh Architecture

Modern Kubernetes systems increasingly use service meshes such as Istio for traffic routing, observability, and resilience.

Service meshes provide advanced routing capabilities inside distributed microservice environments.

CDN-Based Traffic Distribution

Content Delivery Networks distribute static assets globally while reducing pressure on backend systems.

This complements traditional load balancing strategies effectively.

Final Thoughts

Load balancers are one of the most important building blocks in scalable distributed systems. They improve reliability, scalability, fault tolerance, and operational flexibility by distributing traffic intelligently across infrastructure resources.

For C# developers working with ASP.NET Core, cloud infrastructure, microservices, or high-traffic APIs, understanding load balancer types and routing strategies is essential for designing resilient production-grade systems.