LRU Cache Implementation in C# with Examples and Best Practices

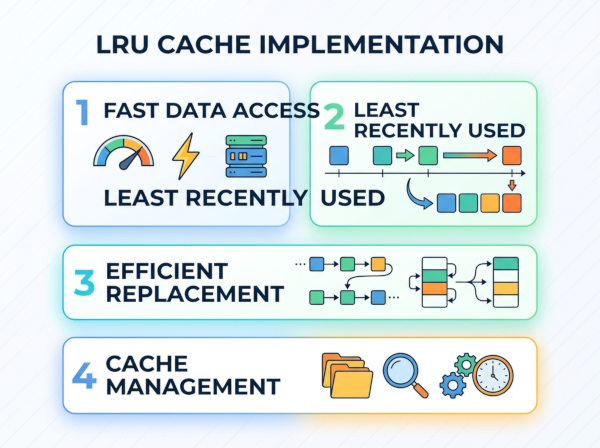

An LRU (Least Recently Used) cache is a data structure that automatically removes the least recently accessed item when the cache reaches its maximum capacity.

An LRU cache stores frequently used data in memory so applications can access it quickly without recalculating or reloading it from slower resources such as databases, APIs, or disks. The main idea is simple: if an item was used recently, it is likely to be used again soon.

The cache has a fixed size. When the cache becomes full and a new item needs to be added, the system removes the item that has not been used for the longest time. This keeps the cache efficient while preventing unlimited memory growth.

An LRU cache usually supports two main operations:

• Get(key) → retrieves an item from the cache

• Put(key, value) → adds or updates an item in the cache

A well-designed LRU cache performs both operations in constant time, which means O(1) complexity.

Why We Use LRU Cache?

LRU caching exists to improve application performance and reduce expensive operations. Instead of repeatedly fetching the same data from a slow source, the application temporarily stores recently used results in memory.

For example, imagine an e-commerce website where product details are requested thousands of times per minute. Without caching, every request would hit the database directly. With an LRU cache, recently viewed products stay in memory, dramatically reducing database load and improving response time.

LRU is especially useful because it follows real-world usage patterns. In most systems, recently used data is more likely to be used again. This principle is called "temporal locality."

How LRU Cache Works?

An LRU cache internally tracks usage order. Whenever an item is accessed, it becomes the "most recently used" item.

Suppose the cache size is 3:

Cache: [A, B, C]

Access B:

Cache becomes: [A, C, B]

Add D:

A is removed because it is the least recently used.

Cache becomes: [C, B, D]

The cache continuously updates this order whenever items are read or inserted.

Best Use Cases

Database Query Caching

Database queries are expensive compared to memory lookups. An LRU cache can store recently executed query results, reducing database pressure and improving response speed.

For example, a reporting dashboard may repeatedly request the same sales statistics during a short period. Instead of recalculating the results each time, the cache serves them directly from memory.

API Response Caching

External APIs may have rate limits, latency, or usage costs. LRU caching helps avoid repeated requests for the same data.

For instance, a weather application could cache recent city forecasts for several minutes. If many users request the same city, the application avoids unnecessary API calls.

Session Management

Applications often store temporary user session data such as authentication details or preferences. LRU caching helps keep active users in memory while automatically removing inactive sessions.

This approach prevents uncontrolled memory usage in systems with millions of users.

Image or File Caching

Applications that repeatedly load images, thumbnails, or static files can benefit heavily from LRU caching.

Streaming platforms, design tools, and content delivery systems commonly keep recently accessed media files in memory to reduce disk access time.

Machine Learning Feature Caching

Machine learning pipelines frequently recompute expensive feature transformations. An LRU cache can store recently calculated features for active users or datasets.

This reduces CPU usage and improves inference speed in recommendation systems or fraud detection systems.

Implementation Details of LRU Cache

A proper LRU cache typically combines two data structures:

HashMap (Dictionary)

A dictionary provides fast lookup by key.

Key -> Node

This allows the cache to locate items instantly.

Doubly Linked List

A doubly linked list maintains usage order.

• Most recently used items stay at the front

• Least recently used items stay at the back

When an item is accessed:

• remove it from its current position

• move it to the front

When eviction is needed:

• remove the item at the back

Why These Two Structures Together?

A dictionary alone cannot efficiently track usage order.

A linked list alone cannot efficiently find items by key.

Combining both gives:

• O(1) lookup

• O(1) insertion

• O(1) deletion

• O(1) eviction

This is the standard interview-quality LRU implementation.

C# LRU Cache Example

Basic Generic Implementation

using System;

using System.Collections.Generic;

public class LRUCache<TKey, TValue>

{

private readonly int _capacity;

private readonly Dictionary<TKey, LinkedListNode<CacheItem>> _cache;

private readonly LinkedList<CacheItem> _usageList;

public LRUCache(int capacity)

{

_capacity = capacity;

_cache = new Dictionary<TKey, LinkedListNode<CacheItem>>();

_usageList = new LinkedList<CacheItem>();

}

public TValue Get(TKey key)

{

if (!_cache.ContainsKey(key))

{

return default;

}

var node = _cache[key];

_usageList.Remove(node);

_usageList.AddFirst(node);

return node.Value.Value;

}

public void Put(TKey key, TValue value)

{

if (_cache.ContainsKey(key))

{

var existingNode = _cache[key];

_usageList.Remove(existingNode);

existingNode.Value.Value = value;

_usageList.AddFirst(existingNode);

return;

}

if (_cache.Count >= _capacity)

{

var leastUsed = _usageList.Last;

if (leastUsed != null)

{

_cache.Remove(leastUsed.Value.Key);

_usageList.RemoveLast();

}

}

var item = new CacheItem

{

Key = key,

Value = value

};

var newNode = new LinkedListNode<CacheItem>(item);

_usageList.AddFirst(newNode);

_cache[key] = newNode;

}

private class CacheItem

{

public TKey Key { get; set; }

public TValue Value { get; set; }

}

}

Example Usage

var cache = new LRUCache<int, string>(3);

cache.Put(1, "Apple");

cache.Put(2, "Banana");

cache.Put(3, "Orange");

Console.WriteLine(cache.Get(2));

cache.Put(4, "Mango");

Console.WriteLine(cache.Get(1));

Explanation:

• Cache capacity is 3

• Accessing key 2 makes it recently used

• Adding key 4 removes key 1

• Get(1) returns null/default because it was evicted

Thread-Safe LRU Cache Example

In real applications, multiple threads may access the cache simultaneously. Without synchronization, race conditions may occur.

private readonly object _lock = new object();

public TValue Get(TKey key)

{

lock (_lock)

{

// thread-safe logic

}

}

Production systems often use:

• ReaderWriterLockSlim

• concurrent collections

• distributed cache systems

Advantages of LRU Cache

Very Fast Access

A properly implemented LRU cache provides constant-time operations. This makes it ideal for high-performance applications where latency matters.

Applications such as trading systems, gaming servers, and recommendation engines benefit heavily from low-latency access.

Automatic Memory Control

The cache has a fixed size, so memory usage remains predictable. Old data is automatically removed without requiring manual cleanup.

This is especially important for long-running services where uncontrolled memory growth can crash the application.

Excellent Real-World Behavior

LRU works well because recent activity often predicts future activity. Most users repeatedly access the same small subset of data within short periods.

This behavior makes LRU highly effective for web applications, APIs, and user-driven systems.

Disadvantages of LRU Cache

Recently Used Does Not Always Mean Important

Some applications need frequently used data, not recently used data. In those cases, LRU may remove valuable items too early.

For example, a rarely accessed but expensive-to-generate report could be evicted even though regenerating it is costly.

Memory Overhead

The cache requires additional structures such as linked lists and dictionaries. This increases memory consumption compared to simpler approaches.

Large-scale systems with millions of entries must carefully optimize object allocation.

Thread Safety Complexity

Concurrent access introduces synchronization challenges. Poor locking strategies can reduce performance or create deadlocks.

High-throughput systems often require specialized concurrent cache implementations.

Common Mistakes

Using Only a Dictionary

Many developers initially try implementing LRU using only a dictionary. While lookup becomes fast, tracking usage order becomes inefficient.

Without a linked structure, eviction may require scanning the entire collection, increasing complexity to O(n).

Forgetting to Update Usage Order

A common bug is retrieving an item without moving it to the "recently used" position.

This breaks the entire eviction policy because frequently accessed items may still be removed.

Not Handling Capacity Correctly

Some implementations allow the cache to exceed its maximum size temporarily.

This can create memory spikes and inconsistent eviction behavior under heavy load.

Ignoring Thread Safety

Caches are commonly shared between requests or threads. Without synchronization, applications may encounter corrupted state or random failures.

Thread safety becomes critical in ASP.NET applications, background workers, and distributed systems.

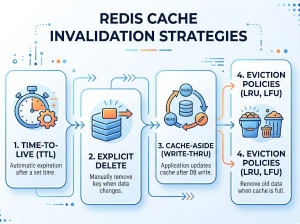

Alternatives to LRU Cache

LFU (Least Frequently Used)

LFU removes items that are accessed the fewest number of times instead of the least recent items.

This strategy works better when long-term popularity matters more than short-term recency. For example, analytics systems or recommendation engines may prefer LFU because frequently requested data stays cached longer.

FIFO (First In First Out)

FIFO removes the oldest inserted item regardless of usage history.

It is simpler and cheaper to implement than LRU, but it may evict highly valuable items that are still actively used.

MRU (Most Recently Used)

MRU removes the most recently used item first.

Although unusual, it can work well in workloads where recently accessed items are unlikely to be reused soon, such as certain sequential scanning operations.

ARC (Adaptive Replacement Cache)

ARC dynamically balances recency and frequency to improve cache hit rates.

It adapts automatically to changing access patterns, making it more intelligent than pure LRU in enterprise-scale systems.

Distributed Caches

Systems such as Redis or Memcached provide network-based caching across multiple servers.

These solutions are ideal for scalable cloud applications where a single in-memory cache is insufficient.

Built-In Caching Options in C#

MemoryCache

.NET already includes built-in caching support through MemoryCache.

using Microsoft.Extensions.Caching.Memory;

var memoryCache = new MemoryCache(

new MemoryCacheOptions()

);

memoryCache.Set("user_1", "John");

var user = memoryCache.Get("user_1");

This is usually preferred in production applications because it already handles:

• expiration

• eviction

• memory pressure

• concurrency

• optimization

Performance Complexity

| Operation | Complexity |

|---|---|

| Get | O(1) |

| Put | O(1) |

| Remove | O(1) |

| Eviction | O(1) |

When You Should NOT Use LRU Cache?

LRU is not always the best solution.

If data changes constantly and cache invalidation becomes difficult, caching may introduce stale data problems. In highly write-heavy systems, maintaining cache consistency can become more expensive than the performance gains.

Similarly, applications with random access patterns may see poor cache hit rates because recently used items do not predict future requests.