Retrieval-Augmented Generation (RAG) in LLMs: Architecture, Use Cases, Advantages, and Alternatives

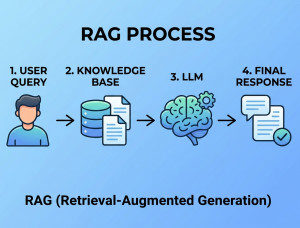

Retrieval-Augmented Generation (RAG) is an AI architecture that enhances Large Language Models (LLMs) by retrieving relevant external information before generating a response.

RAG combines an information retrieval system with a generative model to produce more accurate, contextual, and up-to-date responses. Instead of relying only on the data seen during training, a RAG system searches external knowledge sources such as documents, databases, APIs, or websites at query time. The retrieved content is added to the user's prompt, and the augmented prompt is passed to the LLM for answer generation. Because the answer is grounded in retrieved text, this approach reduces hallucinations and lets AI systems answer questions using private or frequently changing data. RAG is widely used in enterprise chatbots, knowledge assistants, document search systems, and AI customer support platforms.
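The retrieve-then-augment flow described above can be sketched in a few lines of Python. This is a minimal illustration rather than a production design: the word-overlap `retrieve` function stands in for a real retriever, and the names `tokenize`, `retrieve`, `build_augmented_prompt`, and the sample documents are all assumptions made for this example. The final call to an LLM is left out, since it depends on the provider.

```python
import re


def tokenize(text: str) -> set[str]:
    """Lowercase the text and return its set of alphanumeric word tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question: str, documents: list[str], top_k: int = 2) -> list[str]:
    """Toy retriever: rank documents by how many question words they share."""
    q_tokens = tokenize(question)
    scored = [(len(q_tokens & tokenize(doc)), doc) for doc in documents]
    # Keep only documents with at least one shared word, best match first.
    ranked = sorted((s for s in scored if s[0] > 0), key=lambda s: s[0], reverse=True)
    return [doc for _, doc in ranked[:top_k]]


def build_augmented_prompt(question: str, context_chunks: list[str]) -> str:
    """Place the retrieved chunks ahead of the user question."""
    context = "\n".join(f"[{i + 1}] {chunk}" for i, chunk in enumerate(context_chunks))
    return (
        "Answer the question using only the context below.\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {question}"
    )


# Hypothetical knowledge source for illustration only.
documents = [
    "Employees receive 25 days of paid vacation per year.",
    "The office is closed on public holidays.",
    "Expense reports must be submitted within 30 days.",
]
question = "How many vacation days do employees receive?"
prompt = build_augmented_prompt(question, retrieve(question, documents))
print(prompt)  # this string is what would be sent to the LLM
```

The document about public holidays shares no words with the question, so it never reaches the prompt. A real system would replace the word-overlap score with semantic search over embeddings.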

Why Do We Use RAG?

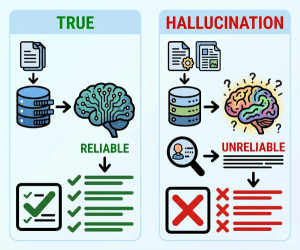

We use RAG because standard LLMs have limitations:

• They may generate incorrect or hallucinated information.

• Their knowledge is limited to training-time data.

• Retraining or fine-tuning models is expensive and slow.

• They cannot access private company documents or live data on their own.

• Enterprises need traceable and source-grounded answers.

RAG addresses these problems by retrieving relevant information at inference time, so the model answers from current, source-backed content rather than from memory alone.

When Should We Use RAG?

Best Use Cases for RAG:

• Enterprise knowledge assistants

• Customer support chatbots

• Internal document search systems

• Legal and compliance document querying

• Medical knowledge assistants

• Financial research tools

• AI coding assistants with documentation lookup

• Research and academic search systems

• Real-time news or live-data assistants

• Multi-document question answering

When NOT to Use RAG?

• Simple tasks that do not require external knowledge

• Applications requiring ultra-low latency responses

• Small datasets that can fit directly into prompts

• Highly deterministic workflows where rule-based systems are better

• Cases where retrieved data quality is poor or inconsistent

• Applications where fine-tuning alone is sufficient

Key Components of RAG

| Component | Description |
|---|---|
| Data Source | Documents, databases, APIs, websites, PDFs, or internal knowledge bases. |
| Document Loader | Extracts and imports content from multiple sources. |
| Chunking | Splits large documents into smaller searchable pieces. |
| Embedding Model | Converts text into vector representations. |
| Vector Database | Stores embeddings for semantic similarity search. |
| Retriever | Finds the most relevant chunks for a user query. |
| Prompt Augmentation | Adds retrieved context into the LLM prompt. |
| LLM Generator | Generates the final response using retrieved context. |
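To make the chunking, embedding, vector storage, and retriever components more concrete, here is a small self-contained sketch. It rests on loud assumptions: `embed` is a toy word-count vector standing in for a real embedding model, `InMemoryVectorStore` is a hypothetical class standing in for a vector database, and the chunk sizes are chosen only to keep the example short.

```python
import math
import re
from collections import Counter


def chunk_words(text: str, size: int = 40, overlap: int = 10) -> list[str]:
    """Split text into windows of `size` words that overlap by `overlap` words."""
    if not 0 <= overlap < size:
        raise ValueError("overlap must be non-negative and smaller than size")
    words = text.split()
    step = size - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + size]))
        if start + size >= len(words):
            break  # the last window already reached the end of the text
    return chunks


def embed(text: str) -> Counter:
    """Toy embedding (assumption): sparse word counts instead of a learned model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


class InMemoryVectorStore:
    """Hypothetical stand-in for a vector database: a list scanned linearly."""

    def __init__(self) -> None:
        self._items: list[tuple[str, Counter]] = []

    def add(self, chunk: str) -> None:
        self._items.append((chunk, embed(chunk)))

    def search(self, query: str, top_k: int = 3) -> list[tuple[float, str]]:
        """Return the top_k (score, chunk) pairs, most similar first."""
        q = embed(query)
        scored = [(cosine(q, vec), chunk) for chunk, vec in self._items]
        return sorted(scored, key=lambda s: s[0], reverse=True)[:top_k]


text = (
    "Refunds are issued within 14 days of purchase. "
    "Shipping takes three to five business days. "
    "Support is available by email on weekdays."
)
chunks = chunk_words(text, size=8, overlap=2)
store = InMemoryVectorStore()
for chunk in chunks:
    store.add(chunk)

results = store.search("When are refunds issued?", top_k=1)
print(results[0][1])
```

The overlap between neighbouring chunks is one common way to avoid cutting a sentence's meaning in half at a chunk boundary. A real vector database would replace the linear scan with an approximate nearest-neighbour index.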

Key Features of RAG

| Feature | Benefit |
|---|---|
| External Knowledge Access | Uses information beyond training data. |
| Real-Time Information | Supports frequently updated content. |
| Reduced Hallucinations | Improves factual accuracy. |
| Source Grounding | Responses can reference retrieved documents. |
| Scalability | Works with large enterprise datasets. |
| Domain Adaptability | Easy integration with industry-specific data. |
| Cost Efficiency | Avoids expensive model retraining. |

Implementation Examples of RAG

Example 1: Enterprise Knowledge Chatbot

Workflow:

1. Employee asks a question.

2. Retriever searches company documents.

3. Relevant text chunks are retrieved.

4. LLM generates a grounded response.

Technologies:

• OpenAI GPT models

• LangChain

• Pinecone or Weaviate

• PostgreSQL

• Elasticsearch

Example 2: PDF Question-Answering System

Workflow:

1. Upload PDF files.

2. Extract text and split into chunks.

3. Create embeddings.

4. Store vectors in a vector database.

5. Retrieve relevant chunks for user questions.

6. Generate answers using the LLM.
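The six steps above could be wired together roughly as follows. This sketch assumes step 1 and the text extraction have already happened, so `pages` is a plain list of page strings (a real system would obtain them from a PDF parsing library). The word-count `embed` function, the `IndexedChunk` record, and `echo_llm` are hypothetical stand-ins for an embedding model, a vector database row, and an LLM call.

```python
import math
import re
from collections import Counter
from dataclasses import dataclass
from typing import Callable


@dataclass
class IndexedChunk:
    source: str      # file name the chunk came from
    page: int        # 1-based page number
    text: str        # the chunk itself (one sentence here)
    vector: Counter  # toy word-count embedding


def embed(text: str) -> Counter:
    """Toy embedding (assumption): word counts instead of a learned model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def index_pdf(source: str, pages: list[str]) -> list[IndexedChunk]:
    """Steps 2-4: split each page into sentence chunks, embed them, store them."""
    index = []
    for page_no, page_text in enumerate(pages, start=1):
        for sentence in re.split(r"(?<=[.!?])\s+", page_text.strip()):
            if sentence:
                index.append(IndexedChunk(source, page_no, sentence, embed(sentence)))
    return index


def answer(
    question: str,
    index: list[IndexedChunk],
    llm: Callable[[str], str],
    top_k: int = 2,
) -> tuple[str, list[str]]:
    """Steps 5-6: retrieve the best chunks, then generate an answer with citations."""
    q = embed(question)
    hits = sorted(index, key=lambda c: cosine(q, c.vector), reverse=True)[:top_k]
    context = "\n".join(f"({c.source}, p. {c.page}) {c.text}" for c in hits)
    prompt = f"Context:\n{context}\n\nQuestion: {question}"
    citations = [f"{c.source}, p. {c.page}" for c in hits]
    return llm(prompt), citations


def echo_llm(prompt: str) -> str:
    """Stand-in for a real LLM call: repeats the top-ranked context line."""
    return "Based on the document: " + prompt.splitlines()[1]


# Hypothetical extracted text, one string per page.
pages = [
    "The warranty lasts two years. Batteries are covered for one year.",
    "Returns require a receipt. Contact support to start a return.",
]
index = index_pdf("manual.pdf", pages)
reply, citations = answer("How long does the warranty last?", index, echo_llm, top_k=1)
print(reply)
print(citations)
```

Keeping the file name and page number next to each chunk is what lets the system show users where an answer came from.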

Example 3: Customer Support Assistant

The chatbot retrieves:

• Product manuals

• FAQs

• Troubleshooting guides

• Support tickets

Grounding answers in this material improves response quality and reduces hallucinations.

Advantages of RAG

| Advantage | Explanation |
|---|---|
| More Accurate Responses | Uses retrieved evidence during generation. |
| Up-to-Date Knowledge | Can access current information dynamically. |
| No Frequent Retraining | Knowledge updates happen in the data layer. |
| Supports Private Data | Works with internal enterprise documents. |
| Better Explainability | Retrieved sources can be shown to users. |
| Lower Training Cost | Reduces dependency on expensive fine-tuning. |

Disadvantages of RAG

| Disadvantage | Explanation |
|---|---|
| System Complexity | Requires retrieval pipelines and vector databases. |
| Latency | Retrieval adds extra processing time. |
| Retrieval Dependency | Poor retrieval quality leads to poor answers. |
| Chunking Challenges | Bad chunking reduces retrieval effectiveness. |
| Infrastructure Cost | Needs embedding storage and search infrastructure. |
| Context Window Limits | Too much retrieved text may exceed token limits. |
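One way to handle the context window limit listed above is to cap the retrieved text at a fixed token budget before building the prompt. The sketch below is one possible approach, not a standard algorithm. It assumes the chunks arrive ranked best-first, and it estimates tokens by counting whitespace-separated words, which only approximates what a real tokenizer would report. The function names are hypothetical.

```python
def estimate_tokens(text: str) -> int:
    """Rough token estimate (assumption): whitespace word count."""
    return len(text.split())


def pack_context(ranked_chunks: list[str], max_tokens: int) -> list[str]:
    """Greedily keep the highest-ranked chunks that fit within the token budget."""
    selected = []
    used = 0
    for chunk in ranked_chunks:  # assumed to be sorted best-first by the retriever
        cost = estimate_tokens(chunk)
        if used + cost > max_tokens:
            continue  # this chunk would overflow; a smaller one later may still fit
        selected.append(chunk)
        used += cost
    return selected


# Placeholder chunks of 5, 8, and 3 "tokens", ranked best-first.
ranked = ["a b c d e", "f g h i j k l m", "n o p"]
packed = pack_context(ranked, max_tokens=9)
print(packed)
```

With a budget of 9, the first chunk fits, the second would overflow and is skipped, and the third still fits. Skipping rather than stopping trades strict rank order for better use of the budget. Stopping at the first overflow is an equally reasonable choice.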

Alternatives to RAG (Compared with Similar Technologies)

| Technology | Description | Compared to RAG |
|---|---|---|
| Fine-Tuning | Continues training the model on custom datasets. | Better for learning behavior or style, but more expensive and harder to update than RAG. |
| Prompt Engineering | Improves outputs using carefully designed prompts. | Simpler, but cannot supply new external knowledge dynamically. |
| Long-Context LLMs | Models with very large context windows. | Can process large inputs directly, but may become expensive and inefficient at scale. |
| Knowledge Graphs | Structured, relationship-based data systems. | Support more precise reasoning over relationships, but are harder to maintain and scale. |
| Traditional Search Engines | Keyword or semantic document retrieval systems. | Good at retrieval, but lack natural language generation. |
| Agentic AI Systems | Autonomous systems using tools and reasoning loops. | More powerful for multi-step tasks, but often still use RAG internally. |

Summary

RAG is one of the most important architectures for modern AI applications because it combines the reasoning and language generation capabilities of LLMs with knowledge retrieval at query time. It improves factual accuracy, enables access to private or frequently updated information, and reduces dependence on expensive retraining. While RAG adds infrastructure complexity and latency, it is highly effective for enterprise AI, search assistants, customer support, and other knowledge-intensive applications.