LLM Hallucination Explained: Causes, Prevention Methods, and Reduction Techniques in AI Systems

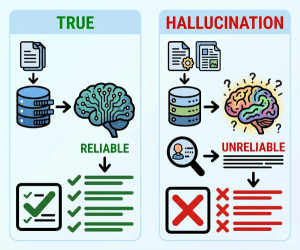

LLM hallucination is the phenomenon where a Large Language Model (LLM) generates false, misleading, fabricated, or logically incorrect information while presenting it as factual.

Large Language Models generate responses by predicting likely next tokens from patterns learned during training, not by verifying factual correctness. Because generation is probabilistic, LLMs can invent facts, citations, code, names, statistics, or explanations that appear convincing but are incorrect. Hallucinations can stem from missing context, ambiguous prompts, outdated training data, insufficient retrieval grounding, or limitations in reasoning capabilities. These errors are especially problematic in high-risk domains such as healthcare, finance, law, cybersecurity, and scientific research. Reducing hallucinations is one of the most important challenges in modern generative AI systems.

Why Do LLM Hallucinations Happen?

LLM hallucinations occur because language models are optimized for fluent text generation rather than guaranteed factual accuracy.

Common causes include:

• Lack of real-time knowledge

• Ambiguous or poorly written prompts

• Missing contextual information

• Weak retrieval systems in RAG pipelines

• Insufficient training data coverage

• Conflicting information during training

• Token prediction limitations

• Long-context confusion

• Overconfidence in probabilistic generation

• Domain-specific knowledge gaps

Types of LLM Hallucinations

| Hallucination Type | Description | Example |
|---|---|---|
| Factual Hallucination | Generates incorrect facts or information. | Inventing fake historical events. |
| Citation Hallucination | Creates fake references or sources. | Non-existent research papers. |
| Logical Hallucination | Produces reasoning errors or contradictions. | Incorrect mathematical reasoning. |
| Code Hallucination | Generates invalid or non-existent APIs/functions. | Imaginary Python libraries. |
| Contextual Hallucination | Misunderstands user intent or context. | Answering a different question. |
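
The "Code Hallucination" row is one of the few types that can be partly checked mechanically. The sketch below is a minimal illustration, not a complete validator: it parses generated Python with the standard-library `ast` module and uses `importlib.util.find_spec` to flag top-level imports that cannot be found in the current environment. The function name `missing_modules` and the sample string are hypothetical. A module reported as missing may simply be uninstalled rather than invented, so treat the result as a prompt for review.

```python
import ast
import importlib.util


def missing_modules(source: str) -> list[str]:
    """Return top-level modules imported by `source` that are not importable here."""
    tree = ast.parse(source)
    names: set[str] = set()
    for node in ast.walk(tree):
        if isinstance(node, ast.Import):
            # "import a.b.c" -> check only the top-level package "a"
            names.update(alias.name.split(".")[0] for alias in node.names)
        elif isinstance(node, ast.ImportFrom) and node.module and node.level == 0:
            # Skip relative imports (level > 0); they depend on package layout
            names.add(node.module.split(".")[0])
    return sorted(n for n in names if importlib.util.find_spec(n) is None)


# Hypothetical model output that mixes real and invented imports
generated = "import json\nimport totally_made_up_llm_lib\nfrom os import path\n"
print(missing_modules(generated))  # ['totally_made_up_llm_lib']
```

This only catches imaginary libraries. A hallucinated function on a real library would need a further check, such as running the code in a sandbox or inspecting the module's attributes.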

Why Is Hallucination a Serious Problem?

Major Risks

• Spreading misinformation

• Incorrect business decisions

• Legal and compliance issues

• Medical or financial harm

• Security vulnerabilities in generated code

• Loss of user trust

• Reduced enterprise AI reliability

High-Risk Industries

• Healthcare

• Finance

• Legal services

• Cybersecurity

• Scientific research

• Government systems

How to Reduce LLM Hallucination?

Most Effective Techniques

| Technique | How It Reduces Hallucination |

|---|---|

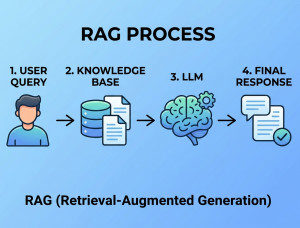

| Retrieval-Augmented Generation (RAG) | Provides external factual context during generation. |

| Prompt Engineering | Improves clarity and constrains model responses. |

| Grounding | Forces responses to rely on trusted data sources. |

| Fine-Tuning | Improves domain-specific knowledge accuracy. |

| Output Verification | Uses secondary validation systems or fact-checking. |

| Smaller Response Scope | Reduces speculative answer generation. |

| Human-in-the-Loop | Adds expert review for critical outputs. |

| Temperature Tuning | Lower temperature reduces randomness. |

| Structured Prompting | Encourages step-by-step reasoning. |

| Knowledge Base Integration | Connects the model to authoritative enterprise data. |
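
To make the "Temperature Tuning" row concrete, here is a small sketch of temperature-scaled softmax, the standard way sampling temperature reshapes a next-token distribution. The logit values are made up for illustration, and `softmax_with_temperature` is a hypothetical helper, not any specific library's API.

```python
import math


def softmax_with_temperature(logits: list[float], temperature: float) -> list[float]:
    """Convert raw scores to probabilities after dividing by the temperature."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


# Hypothetical scores for three candidate next tokens
logits = [2.0, 1.0, 0.5]
for t in (1.0, 0.5, 0.1):
    probs = softmax_with_temperature(logits, t)
    print(f"T={t}: {[round(p, 3) for p in probs]}")
```

As the temperature drops, probability mass concentrates on the highest-scoring token, so sampling becomes more repeatable. That reduces randomness, but it does not correct a model whose top-ranked token is itself wrong, which is why temperature is usually combined with grounding and verification.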

Best Practices to Minimize Hallucination

| Best Practice | Benefit |
|---|---|
| Use RAG Architecture | Improves factual grounding. |
| Provide Clear Prompts | Reduces ambiguity. |
| Use Trusted Data Sources | Improves reliability. |
| Limit Open-Ended Questions | Reduces speculative responses. |
| Enable Source Citations | Improves transparency. |
| Validate Critical Outputs | Prevents high-risk mistakes. |
| Monitor Model Performance | Detects hallucination patterns. |
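
Several of these practices (clear prompts, trusted sources, source citations, output validation) can be combined in a small amount of code. The following is a sketch under stated assumptions: the prompt wording, the `[n]` citation format, and the helper names `build_prompt` and `invalid_citations` are all illustrative choices, and the call to an actual model is left out.

```python
import re

# Illustrative template: restricts the model to supplied sources and
# gives it an explicit way to decline instead of guessing.
PROMPT_TEMPLATE = """Answer using only the numbered sources below.
Cite sources as [1], [2], ... after each claim.
If the sources do not contain the answer, reply exactly: "I don't know."

Sources:
{sources}

Question: {question}
"""


def build_prompt(question: str, passages: list[str]) -> str:
    """Number the trusted passages and embed them in the template."""
    sources = "\n".join(f"[{i}] {p}" for i, p in enumerate(passages, start=1))
    return PROMPT_TEMPLATE.format(sources=sources, question=question)


def invalid_citations(answer: str, num_sources: int) -> list[int]:
    """Return cited source numbers that do not match any supplied passage."""
    cited = {int(n) for n in re.findall(r"\[(\d+)\]", answer)}
    return sorted(n for n in cited if not 1 <= n <= num_sources)


passages = [
    "The policy took effect in 2021.",
    "Refunds are issued within 14 days.",
]
prompt = build_prompt("When are refunds issued?", passages)

# Hypothetical model answer that cites a source number that was never supplied
answer = "Refunds are issued within 14 days [2], per the handbook [5]."
print(invalid_citations(answer, len(passages)))  # [5]
```

A citation that points to a real source number can still misstate what that source says, so this check catches fabricated references only, not unsupported claims.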

When Should We Focus Strongly on Hallucination Reduction?

Critical Use Cases

• Medical diagnosis assistants

• Financial advisory systems

• Legal document analysis

• Enterprise knowledge assistants

• AI coding assistants

• Research and scientific tools

• Government and compliance systems

Lower-Risk Use Cases

• Creative writing

• Brainstorming

• Marketing content drafts

• Entertainment chatbots

• Fiction generation

Key Components in Hallucination Reduction Systems

| Component | Role |
|---|---|
| Retriever | Fetches trusted contextual information. |
| Vector Database | Stores searchable embeddings. |
| Fact Checker | Validates generated outputs. |
| Prompt Template | Constrains generation behavior. |
| Confidence Scoring | Estimates output reliability. |
| Human Reviewer | Verifies high-risk responses. |
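
The components above can be wired together roughly as follows. This is a deliberately toy sketch: word overlap stands in for both the embedding-based retriever and the fact checker, the 0.6 threshold is arbitrary, and every name (`retrieve`, `support_score`, `review_gate`, `Decision`) is hypothetical. A production system would more likely use a vector database for retrieval and a stronger verifier for scoring.

```python
import re
from dataclasses import dataclass


def tokens(text: str) -> set[str]:
    """Lowercased alphanumeric words in the text."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, documents: list[str], k: int = 2) -> list[str]:
    """Toy retriever: rank documents by word overlap with the query."""
    q = tokens(query)
    ranked = sorted(documents, key=lambda d: len(q & tokens(d)), reverse=True)
    return ranked[:k]


def support_score(answer: str, context: list[str]) -> float:
    """Crude confidence proxy: fraction of answer words found in the context."""
    a = tokens(answer)
    if not a:
        return 0.0
    c = set().union(*(tokens(p) for p in context))
    return len(a & c) / len(a)


@dataclass
class Decision:
    answer: str
    score: float
    needs_human_review: bool


def review_gate(answer: str, context: list[str], threshold: float = 0.6) -> Decision:
    """Route weakly supported answers to a human reviewer."""
    score = support_score(answer, context)
    return Decision(answer, score, score < threshold)


docs = [
    "Refunds are issued within 14 days of purchase.",
    "Support is available on weekdays.",
    "Shipping takes 3 to 5 business days.",
]
context = retrieve("When are refunds issued?", docs)

# Two hypothetical model answers: one grounded, one with invented details
for candidate in (
    "Refunds are issued within 14 days.",
    "Refunds are issued within 90 days by wire transfer.",
):
    print(review_gate(candidate, context))
```

Lexical overlap will miss paraphrases and can be fooled by answers that reuse context words while changing their meaning, so the score here is only a routing signal for the human reviewer, not a measure of truth.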

Advantages of Hallucination Reduction Techniques

| Advantage | Explanation |
|---|---|
| Improved Accuracy | Reduces false information generation. |
| Higher User Trust | Users rely more on grounded AI outputs. |
| Better Enterprise Adoption | Supports business-critical AI systems. |
| Reduced Legal Risk | Lowers misinformation liability. |
| Safer AI Systems | Minimizes harmful outputs. |

Disadvantages of Hallucination Reduction Techniques

| Disadvantage | Explanation |
|---|---|
| Higher Infrastructure Cost | Requires retrieval and validation systems. |
| Increased Latency | Verification and retrieval add processing time. |
| System Complexity | More components increase maintenance difficulty. |
| Not Fully Eliminated | No method guarantees zero hallucination. |
| Potential Over-Restriction | Strong constraints may reduce creativity. |

Alternatives and Complementary Approaches

| Approach | Description | Compared to Hallucination Reduction via RAG |
|---|---|---|
| Fine-Tuning | Trains models on domain-specific datasets. | Improves specialization but may still hallucinate. |
| Rule-Based AI | Uses deterministic logic systems. | Highly reliable but less flexible. |
| Knowledge Graphs | Structured factual relationship systems. | Better factual consistency but harder to maintain. |
| Long-Context Models | Processes larger documents directly. | Useful but still prone to reasoning errors. |
| Human Validation | Manual expert review process. | Most reliable but not scalable. |

Summary

LLM hallucination occurs when AI models generate inaccurate or fabricated information while sounding confident and convincing. It is a major challenge in generative AI systems because LLMs predict text probabilistically rather than verifying truthfulness. Techniques such as RAG, grounding, prompt engineering, fine-tuning, and output verification reduce hallucination risk and improve reliability. Although hallucinations cannot currently be eliminated completely, modern AI architectures can substantially reduce them through retrieval systems, validation pipelines, and human oversight.