Fine-Tuning in LLMs

Fine-tuning is the process of taking a pretrained model (trained on massive, general data) and training it further on a smaller, targeted dataset.

Instead of starting from scratch, you “nudge” the model to behave in a certain way.

Example:

• Base model → knows general language

• Fine-tuned model → writes legal contracts or answers medical questions in a specific style

Why do we use fine-tuning?

Pretrained models are:

• Broad

• General-purpose

• Not aligned to specific needs

Fine-tuning helps:

• Specialize behavior (e.g., customer support tone)

• Inject domain knowledge (legal, finance, medicine)

• Control outputs (format, style, safety)

• Adapt to internal/company data

Without fine-tuning, you’d rely only on prompting, which has limits: results are less consistent, and long instructions take up context on every request.

Who uses fine-tuning?

• AI companies (OpenAI, Google, Meta) → align base models

• Enterprises → adapt models to internal workflows

• Startups → build niche tools (legal AI, coding assistants, etc.)

• Researchers → experiment with new capabilities

Developers often use tools like:

• PyTorch

• TensorFlow

• Hugging Face Transformers

Types of fine-tuning

1. Supervised fine-tuning (SFT)

Train on input → ideal output pairs

Example:

• User: Explain black holes simply

• Assistant: (high-quality explanation)

Teaches the model “this is what a good answer looks like”
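To make the "input → ideal output" idea concrete, here is a minimal sketch of how one SFT training example might be prepared. The whitespace "tokenizer", the `User:` / `Assistant:` template, and the `<eos>` marker are hypothetical stand-ins for illustration. Masking the prompt so that only the response tokens count toward the loss is a common choice, not the only one.

```python
# Toy illustration of preparing a single SFT example.
# Assumptions: whitespace tokenization and a made-up chat template.

def build_sft_example(prompt: str, response: str):
    """Return (tokens, loss_mask) for one prompt/response pair."""
    prompt_tokens = f"User: {prompt} Assistant:".split()
    response_tokens = response.split() + ["<eos>"]
    tokens = prompt_tokens + response_tokens
    # 0 = token is ignored by the loss (prompt), 1 = token is trained on (response)
    loss_mask = [0] * len(prompt_tokens) + [1] * len(response_tokens)
    return tokens, loss_mask


tokens, loss_mask = build_sft_example(
    "Explain black holes simply",
    "A black hole is a region where gravity is so strong that nothing escapes.",
)
for token, mask in zip(tokens, loss_mask):
    print(mask, token)
```

The model sees the whole sequence, but under this masking it is only "graded" on the assistant part.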

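2. Reinforcement Learning from Human Feedback (RLHF)

• Humans rank or compare model outputs

• A reward model is trained on those rankings, and the LLM is then optimized to produce outputs the reward model scores highly (helpful, safe, polite)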

This is how models become more aligned with human expectations
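As a rough sketch of how rankings can become a training signal: many RLHF recipes first train a reward model on pairs of (preferred, rejected) outputs using a pairwise loss. The function below shows that loss for a single pair. The name `preference_loss` and the example scores are made up for illustration, and the later step (optimizing the LLM against the reward model) is not shown.

```python
import math


def preference_loss(reward_chosen: float, reward_rejected: float) -> float:
    """Pairwise loss: -log(sigmoid(reward_chosen - reward_rejected))."""
    margin = reward_chosen - reward_rejected
    # -log(sigmoid(m)) == log(1 + exp(-m)); both branches avoid overflow.
    if margin >= 0:
        return math.log1p(math.exp(-margin))
    return -margin + math.log1p(math.exp(margin))


# Reward model agrees with the human ranking: small loss.
print(preference_loss(reward_chosen=2.0, reward_rejected=0.5))
# Reward model disagrees with the human ranking: larger loss.
print(preference_loss(reward_chosen=0.5, reward_rejected=2.0))
```

Minimizing this loss pushes the reward model to score the human-preferred output higher than the rejected one.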

3. Instruction tuning

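Special case of SFT: the training pairs are instructions covering many different tasks, each paired with a good response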

Focused on following instructions well
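A small sketch of what an instruction-tuning record can look like once it is flattened into text. The `### Instruction` / `### Input` / `### Response` headers follow one popular convention and are an assumption here. Real datasets and models each define their own template.

```python
def format_instruction_example(instruction: str, response: str, context: str = "") -> str:
    """Flatten one instruction record into a single training string."""
    parts = [f"### Instruction:\n{instruction}"]
    if context:
        # Optional extra input the instruction refers to (e.g. text to summarize)
        parts.append(f"### Input:\n{context}")
    parts.append(f"### Response:\n{response}")
    return "\n\n".join(parts)


print(format_instruction_example(
    instruction="Translate the sentence to French.",
    context="Good morning, everyone.",
    response="Bonjour à tous.",
))
```

Training on many records like this, across many task types, is what teaches the model the general habit of "read the instruction, then do it".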

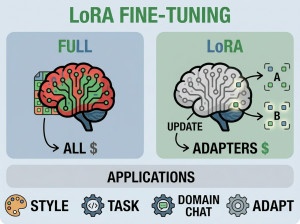

4. Parameter-efficient fine-tuning (PEFT)

Train only a small number of parameters while the original weights stay frozen (e.g., LoRA, which adds small low-rank adapter matrices)

Much cheaper than full fine-tuning
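To see why this is cheaper, here is a minimal LoRA-style sketch in plain Python. The frozen weight matrix `W` is left alone, and a low-rank update `B · A` is added on top. The layer size (4096 × 4096) and rank (8) are illustrative assumptions, and the tiny list-based `matvec` stands in for a real tensor library.

```python
def lora_param_counts(d_in: int, d_out: int, rank: int):
    """Compare trainable parameters: full weight matrix vs. LoRA adapters."""
    full = d_in * d_out               # every entry of W
    lora = rank * (d_in + d_out)      # A is (rank x d_in), B is (d_out x rank)
    return full, lora


def matvec(matrix, vector):
    return [sum(m * v for m, v in zip(row, vector)) for row in matrix]


def lora_forward(W, A, B, x, scale=1.0):
    """y = W x + scale * B (A x). W is frozen; only A and B would be trained."""
    base = matvec(W, x)
    update = matvec(B, matvec(A, x))
    return [b + scale * u for b, u in zip(base, update)]


full, lora = lora_param_counts(d_in=4096, d_out=4096, rank=8)
print(f"full: {full:,}  LoRA: {lora:,}  ratio: {lora / full:.2%}")

W = [[1.0, 0.0], [0.0, 1.0]]   # frozen 2x2 weight
A = [[1.0, 1.0]]               # rank-1 adapter, shape (1 x 2)
B = [[0.0], [0.0]]             # B starts at zero, so the adapter is a no-op at first
print(lora_forward(W, A, B, [3.0, 4.0]))
```

For this one layer the adapter has well under 1% of the parameters of the full matrix, which is where most of the savings come from.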

Real-life analogies for fine-tuning

1. University → job training

Pretraining = getting a general education

Fine-tuning = learning your specific job

2. Hiring a chef

Base model = chef who knows all cuisines

Fine-tuning = training them to cook your restaurant’s menu

Alternatives to fine-tuning

Fine-tuning isn’t the only way to adapt models:

1. Prompt engineering

Give better instructions instead of retraining

Fast, cheap, but less consistent
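One common form of prompt engineering is few-shot prompting: showing the model a handful of worked examples inside the prompt itself. The sketch below only builds the prompt text. The `Input:` / `Output:` layout and the function name are assumptions, and sending the prompt to a model is left out.

```python
def build_few_shot_prompt(task: str, examples: list[tuple[str, str]], query: str) -> str:
    """Assemble a prompt: task description, worked examples, then the new input."""
    lines = [task, ""]
    for example_input, example_output in examples:
        lines += [f"Input: {example_input}", f"Output: {example_output}", ""]
    # Leave the final "Output:" open so the model completes it
    lines += [f"Input: {query}", "Output:"]
    return "\n".join(lines)


prompt = build_few_shot_prompt(
    task="Classify the sentiment as positive or negative.",
    examples=[("I love it", "positive"), ("Terrible service", "negative")],
    query="Pretty good overall",
)
print(prompt)
```

No weights change here, which is why it is fast and cheap, but the examples have to be resent with every request.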

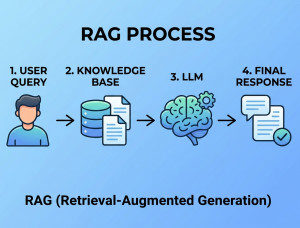

2. Retrieval-Augmented Generation (RAG)

Retrieve relevant external data at runtime and add it to the prompt, instead of training it into the model

Great for up-to-date or private data
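A toy sketch of the retrieve-then-prompt flow. Real systems typically use embedding models and a vector store. Here, simple word-count cosine similarity stands in for retrieval, and the documents, function names, and prompt wording are all made up for illustration.

```python
import math
import re
from collections import Counter


def tokenize(text: str) -> list[str]:
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[term] * b[term] for term in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query: str, documents: list[str], k: int = 1) -> list[str]:
    """Return the k documents most similar to the query (word-overlap based)."""
    query_counts = Counter(tokenize(query))
    ranked = sorted(
        documents,
        key=lambda doc: cosine(query_counts, Counter(tokenize(doc))),
        reverse=True,
    )
    return ranked[:k]


def build_rag_prompt(query: str, documents: list[str], k: int = 1) -> str:
    context = "\n".join(f"- {doc}" for doc in retrieve(query, documents, k))
    return (
        "Answer using only the context below.\n\n"
        f"Context:\n{context}\n\nQuestion: {query}\nAnswer:"
    )


docs = [
    "Refunds are processed within 5 business days.",
    "Our office is closed on public holidays.",
    "Premium plans include priority support.",
]
print(build_rag_prompt("How long do refunds take?", docs))
```

Updating the knowledge means editing `docs`, not retraining anything, which is why RAG suits data that changes often.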

3. System prompts / context injection

Control behavior via initial instructions

Lightweight, but it can only steer what the model already knows, not teach it new skills
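A minimal sketch of how a system prompt is usually placed ahead of the conversation. The `role` / `content` message layout is a widespread chat convention, but the exact schema depends on the provider, so treat the field names here as an assumption.

```python
def build_messages(system_prompt: str, user_message: str, history=None) -> list[dict]:
    """Put the system prompt first, then earlier turns, then the new user message."""
    messages = [{"role": "system", "content": system_prompt}]
    messages.extend(history or [])
    messages.append({"role": "user", "content": user_message})
    return messages


messages = build_messages(
    system_prompt="You are a concise support agent. Answer in at most two sentences.",
    user_message="Where is my order?",
)
for message in messages:
    print(f"{message['role']}: {message['content']}")
```

The system prompt is sent on every request, so its instructions apply to the whole conversation without any training.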

When is fine-tuning better?

Use it when you need:

• Consistent tone/style

• Domain-specific expertise

• Structured outputs (e.g., JSON, legal format)

• Behavior that prompting alone can’t reliably enforce
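One hedged way to judge whether prompting is "reliable enough" for structured output is to measure how often the model's responses actually parse and contain the required fields. The sketch below does that for JSON. The sample outputs and required keys are invented for illustration, and in practice the outputs would come from your own prompted model.

```python
import json


def is_valid_output(text: str, required_keys: set[str]) -> bool:
    """True if the text is a JSON object containing every required key."""
    try:
        data = json.loads(text)
    except json.JSONDecodeError:
        return False
    return isinstance(data, dict) and required_keys.issubset(data)


def compliance_rate(outputs: list[str], required_keys: set[str]) -> float:
    """Fraction of outputs that follow the required format."""
    return sum(is_valid_output(o, required_keys) for o in outputs) / len(outputs)


samples = [
    '{"name": "Ada", "age": 36}',
    'Sure! Here is the JSON: {"name": "Bob", "age": 41}',  # extra chatter breaks parsing
    '{"name": "Cy"}',                                      # missing "age"
    '{"name": "Di", "age": 29}',
]
print(compliance_rate(samples, {"name", "age"}))  # 0.5
```

If the rate stays too low even after improving the prompt, that is a signal fine-tuning may be worth the cost.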

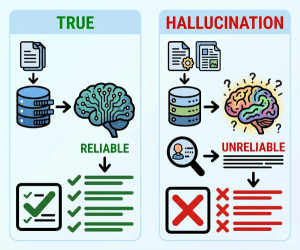

Downsides of fine-tuning

• Costly (compute + data preparation)

• Risk of overfitting (too narrow behavior)

• Maintenance (the model needs retraining as the data changes)

• Catastrophic forgetting (can lose general knowledge if done poorly)
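A common guard against the overfitting risk above is early stopping: watch the loss on held-out validation data and keep the checkpoint from before it starts getting worse. This is a minimal sketch with made-up loss values. The function name and the `patience` setting are illustrative, not a prescribed recipe.

```python
def early_stopping_epoch(val_losses: list[float], patience: int = 2) -> int:
    """Return the index of the epoch whose checkpoint should be kept."""
    best_epoch, best_loss, epochs_without_improvement = 0, float("inf"), 0
    for epoch, loss in enumerate(val_losses):
        if loss < best_loss:
            best_epoch, best_loss, epochs_without_improvement = epoch, loss, 0
        else:
            epochs_without_improvement += 1
            if epochs_without_improvement >= patience:
                break  # validation loss has stopped improving
    return best_epoch


# Validation loss improves, then rises as the model starts to overfit.
val_losses = [1.20, 0.95, 0.88, 0.91, 0.97, 1.05]
print(early_stopping_epoch(val_losses))  # 2
```

Tracking the same kind of held-out loss on general-purpose data can also hint at catastrophic forgetting, since a rising value there suggests the model is losing broader ability.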