OpenAI API vs Open Source LLMs: Differences, Features, Use Cases, Advantages, and Comparison

What is OpenAI API?

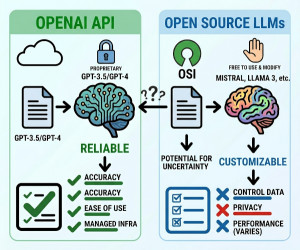

The OpenAI API is a cloud-based service that lets developers access powerful AI models, such as the GPT family, over HTTP without managing the underlying infrastructure.

The OpenAI API provides access to pretrained large language models (LLMs) hosted and managed by OpenAI. Developers can add capabilities such as text generation, reasoning, embeddings, image generation, speech processing, and tool calling to their applications through API requests. Because the models run on OpenAI’s infrastructure, developers do not need to manage GPU servers, scaling, inference optimization, or model training. OpenAI runs the service at production scale, provides security controls, and releases improved models over time. Common uses include AI chatbots, coding assistants, document analysis, customer support systems, and AI automation tools.
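As a minimal sketch of what such an API request looks like, the snippet below builds (but does not send) a Chat Completions request using only the Python standard library. It assumes the `https://api.openai.com/v1/chat/completions` endpoint with Bearer-token authentication; the model name `gpt-4o-mini` and the helper names `build_chat_request` and `send` are illustrative choices, and most real projects would use OpenAI's official SDK instead.

```python
import json
import urllib.request

# Assumed endpoint for OpenAI's Chat Completions API.
OPENAI_CHAT_URL = "https://api.openai.com/v1/chat/completions"


def build_chat_request(prompt: str, api_key: str, model: str = "gpt-4o-mini") -> urllib.request.Request:
    """Build a POST request for a single-turn chat completion (hypothetical helper)."""
    payload = {
        "model": model,  # illustrative model name; use one your account can access
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        OPENAI_CHAT_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",  # API key sent as a Bearer token
            "Content-Type": "application/json",
        },
        method="POST",
    )


def send(request: urllib.request.Request) -> str:
    """Send the request and return the first reply's text (needs network access and a valid key)."""
    with urllib.request.urlopen(request) as response:
        body = json.load(response)
    return body["choices"][0]["message"]["content"]


if __name__ == "__main__":
    req = build_chat_request("Summarize this support ticket in one sentence.", api_key="sk-...")
    print(req.get_method(), req.full_url)  # POST https://api.openai.com/v1/chat/completions
```

The request is built separately from `send` so it can be inspected offline; everything model-related (GPUs, scaling, model versions) stays on OpenAI's side of that single HTTP call.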

What is an Open Source LLM?

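An open source LLM is a language model whose weights, and often its architecture details or training code, are publicly released so that developers can run, customize, fine-tune, and deploy it independently. Many of these models are more precisely described as open-weight, since their training data is usually not published and their licenses may restrict some uses.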

Open source LLMs allow organizations and developers to host and control AI models on their own infrastructure. These models can be downloaded, fine-tuned, modified, and optimized for specific domains or enterprise requirements. Popular open source LLMs include Llama, Mistral, DeepSeek, Gemma, Falcon, and Qwen. Unlike hosted APIs, self-hosted models typically require GPU infrastructure, deployment pipelines, inference optimization, and ongoing maintenance. They are widely used when privacy, customization, offline deployment, or cost control is critical.
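Many self-hosted inference servers, for example vLLM (default address `http://localhost:8000/v1`) and Ollama, can expose an HTTP endpoint that mimics OpenAI's Chat Completions API. The sketch below assumes such a server is already running locally; the helper `build_local_chat_request` and the model identifier used in the usage line are illustrative assumptions, not fixed names.

```python
import json
import urllib.request

# Assumption: a self-hosted, OpenAI-compatible server (e.g. vLLM) listens here.
LOCAL_BASE_URL = "http://localhost:8000/v1"


def build_local_chat_request(prompt: str, model: str, base_url: str = LOCAL_BASE_URL) -> urllib.request.Request:
    """Build a chat request for a self-hosted, OpenAI-compatible server (hypothetical helper)."""
    payload = {
        "model": model,  # the open-weight model the server was started with
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        f"{base_url.rstrip('/')}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},  # no external API key needed locally
        method="POST",
    )


if __name__ == "__main__":
    req = build_local_chat_request(
        "Classify this internal memo.", model="meta-llama/Llama-3.1-8B-Instruct"
    )
    print(req.full_url)  # http://localhost:8000/v1/chat/completions
```

Because the request shape matches the hosted API, moving between a hosted and a self-hosted model is mostly a change of base URL and model name, while the prompt data never leaves your own network in the self-hosted case.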

Why Use the OpenAI API?

Main Reasons

• Fast AI integration

• No infrastructure management

• Access to advanced models

• Enterprise scalability

• Continuous model updates

• High-quality reasoning and generation

• Easy developer experience

• Reliable managed service

Why Use Open Source LLMs?

Main Reasons

• Full model ownership and control

• Data privacy and compliance

• Offline deployment capability

• Custom fine-tuning

• Lower long-term inference cost at scale

• Vendor independence

• Flexible architecture customization

• Research experimentation

Most Popular Open Source LLMs

| Model | Organization | Main Strength |
|---|---|---|
| Llama | Meta | Strong ecosystem and performance. |
| Mistral | Mistral AI | Efficient and high-quality reasoning. |
| DeepSeek | DeepSeek AI | Strong coding and reasoning performance. |
| Gemma | Google | Lightweight deployment options. |
| Qwen | Alibaba Cloud | Multilingual capabilities. |
| Falcon | Technology Innovation Institute | Open research accessibility. |

OpenAI API vs Open Source LLMs

| Feature | OpenAI API | Open Source LLMs |
|---|---|---|
| Hosting | Managed by OpenAI | Self-hosted or cloud-hosted |
| Infrastructure Management | Not required | Required |
| Ease of Use | Very easy | More complex |
| Customization | Limited | High |
| Fine-Tuning Flexibility | Limited compared to self-hosting | Full control |
| Data Privacy Control | Depends on provider policies | Full internal control |
| Performance Optimization | Managed automatically | Requires engineering effort |
| Latency Optimization | Optimized by provider | Depends on deployment quality |
| Scaling | Automatic | Self-managed |
| Cost Model | Pay-per-use API pricing | Infrastructure and operational cost |
| Offline Usage | No | Yes |
| Vendor Lock-In | Higher | Lower |
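The "Cost Model" row can be made concrete with a rough break-even estimate. This sketch is deliberately simple: it treats self-hosting as a flat monthly cost and ignores capacity limits, separate input and output token prices, and engineering time beyond the estimate. All prices below are hypothetical placeholders, not real quotes.

```python
def monthly_api_cost(tokens_per_month: float, price_per_million_tokens: float) -> float:
    """Pay-per-use cost: billed tokens times the per-token price."""
    return tokens_per_month / 1_000_000 * price_per_million_tokens


def break_even_tokens(fixed_monthly_cost: float, price_per_million_tokens: float) -> float:
    """Monthly token volume at which API spend equals a fixed self-hosting budget."""
    return fixed_monthly_cost / price_per_million_tokens * 1_000_000


if __name__ == "__main__":
    # Hypothetical numbers for illustration only; substitute your own quotes.
    price = 2.0           # USD per million tokens (assumed blended price)
    self_hosted = 3000.0  # USD per month for GPUs, power, and operations (assumed)
    print(break_even_tokens(self_hosted, price))  # 1500000000.0
```

Below the break-even volume the pay-per-use API is cheaper under these assumptions; well above it, a fixed-cost self-hosted deployment can become cheaper, which is the "cost at scale" argument for open source LLMs.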

When Should We Use the OpenAI API?

Best Use Cases

• Fast MVP development

• SaaS applications

• AI chatbots

• AI coding assistants

• Small engineering teams

• Rapid prototyping

• Applications needing top-tier reasoning

• Startups with limited infrastructure resources

When NOT to Use the OpenAI API?

• Strict data sovereignty requirements

• Air-gapped or offline systems

• Very high-volume, cost-sensitive inference workloads

• Deep model customization requirements

• Environments requiring full model transparency

When Should We Use Open Source LLMs?

Best Use Cases

• Enterprise private deployments

• Healthcare and regulated industries

• Government systems

• On-premise AI infrastructure

• AI research

• Domain-specific fine-tuning

• Offline AI systems

• Large-scale inference optimization

When NOT to Use Open Source LLMs?

• Small teams without ML infrastructure expertise

• Fast MVP requirements

• Projects with limited GPU resources

• Applications needing minimal operational overhead

• Teams without DevOps or MLOps capabilities

Key Components of OpenAI API Systems

| Component | Role |
|---|---|
| API Gateway | Handles requests and authentication. |
| Hosted LLM | Processes prompts and generates outputs. |
| Embedding Models | Creates vector embeddings. |
| Safety Layer | Applies moderation and security policies. |
| Tool Calling System | Connects external functions and APIs. |
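The tool-calling flow can be sketched as follows, assuming the Chat Completions `tools` format: the application describes its functions with JSON Schema, the model replies with `tool_calls` whose `arguments` field is a JSON string, and the application (not the model) runs the function and returns the result in a `tool` message. The `get_order_status` function and the `REGISTRY` dictionary are hypothetical, and the model's reply is simulated so the sketch runs offline.

```python
import json

# JSON Schema description of a hypothetical function the model may ask to call.
TOOLS = [
    {
        "type": "function",
        "function": {
            "name": "get_order_status",
            "description": "Look up the shipping status of an order.",
            "parameters": {
                "type": "object",
                "properties": {"order_id": {"type": "string"}},
                "required": ["order_id"],
            },
        },
    }
]


def get_order_status(order_id: str) -> dict:
    """Hypothetical local implementation; a real one would query an order database."""
    return {"order_id": order_id, "status": "shipped"}


# Maps tool names the model may request to local Python functions.
REGISTRY = {"get_order_status": get_order_status}


def run_tool_calls(tool_calls: list) -> list:
    """Execute each requested call and build the 'tool' messages to send back to the model."""
    results = []
    for call in tool_calls:
        fn = REGISTRY[call["function"]["name"]]
        args = json.loads(call["function"]["arguments"])  # arguments arrive as a JSON string
        results.append(
            {"role": "tool", "tool_call_id": call["id"], "content": json.dumps(fn(**args))}
        )
    return results


if __name__ == "__main__":
    # Simulated model output requesting one tool call.
    simulated = [
        {
            "id": "call_1",
            "type": "function",
            "function": {"name": "get_order_status", "arguments": '{"order_id": "A123"}'},
        }
    ]
    print(run_tool_calls(simulated))
```

In a full loop, `TOOLS` would be sent with the chat request, and the returned `tool` messages would be appended to the conversation so the model can write its final answer.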

Key Components of Open Source LLM Systems

| Component | Role |
|---|---|
| Model Weights | Core pretrained neural network parameters. |
| Inference Engine | Runs model inference efficiently. |
| GPU Infrastructure | Provides computational power. |
| Fine-Tuning Pipeline | Customizes models for specific tasks. |
| Deployment Layer | Serves the model to applications. |
| Monitoring System | Tracks performance and reliability. |
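A common first step when sizing GPU infrastructure is estimating how much memory the model weights alone occupy: parameter count times bytes per parameter. The sketch below uses a 7-billion-parameter model as an illustrative size; it deliberately excludes the KV cache, activations, and framework overhead, so real deployments need extra headroom beyond these numbers.

```python
# Approximate bytes needed to store one parameter at common precisions.
BYTES_PER_PARAM = {"fp32": 4.0, "fp16": 2.0, "bf16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gb(num_params: float, precision: str) -> float:
    """Memory for the model weights alone, in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * BYTES_PER_PARAM[precision] / 1e9


if __name__ == "__main__":
    for precision in ("fp16", "int8", "int4"):
        # 7B parameters: fp16 -> 14.0, int8 -> 7.0, int4 -> 3.5
        print(precision, weight_memory_gb(7e9, precision))
```

This is why quantization (int8 or int4) is popular for self-hosting: it can let a model fit on a smaller or cheaper GPU, usually at some cost in output quality.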

Advantages of OpenAI API

| Advantage | Explanation |
|---|---|
| Fast Integration | Simple API-based development. |
| No Infrastructure Management | OpenAI manages scaling and optimization. |
| High Performance | Access to advanced frontier models. |
| Continuous Improvements | New and improved models become available without changes to your own infrastructure. |
| Enterprise Reliability | Managed uptime and infrastructure. |

Disadvantages of OpenAI API

| Disadvantage | Explanation |
|---|---|
| Vendor Dependency | Relies on external provider infrastructure. |
| Recurring API Cost | Usage-based pricing can grow significantly. |
| Limited Internal Control | Less control over model internals. |
| Internet Dependency | Requires network connectivity. |
| Policy Restrictions | Must comply with provider usage policies. |

Advantages of Open Source LLMs

| Advantage | Explanation |
|---|---|
| Full Customization | Complete control over model behavior. |
| Data Privacy | Data remains within internal infrastructure. |
| Offline Capability | Works without internet access. |
| Reduced Vendor Lock-In | Greater deployment independence. |
| Fine-Tuning Flexibility | Supports deep domain adaptation. |
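Domain adaptation usually starts with preparing supervised examples. A widely used layout is JSON Lines with one chat-style `messages` list per line; OpenAI's fine-tuning service uses this format, and many open source training tools accept a similar conversational format, but check the exact schema your tool expects. The helper names and the sample question and answer below are hypothetical.

```python
import json


def to_chat_example(system: str, question: str, answer: str) -> dict:
    """Wrap one supervised example in the chat 'messages' layout."""
    return {
        "messages": [
            {"role": "system", "content": system},
            {"role": "user", "content": question},
            {"role": "assistant", "content": answer},  # the target the model learns to produce
        ]
    }


def to_jsonl(examples: list) -> str:
    """Serialize examples as JSON Lines: one JSON object per line."""
    return "\n".join(json.dumps(ex, ensure_ascii=False) for ex in examples) + "\n"


if __name__ == "__main__":
    # Hypothetical internal-policy data; a real dataset would be much larger.
    system = "You answer questions about company HR policy."
    pairs = [("How many vacation days do new hires get?", "New hires receive 20 days per year.")]
    examples = [to_chat_example(system, q, a) for q, a in pairs]
    print(to_jsonl(examples), end="")
```

Keeping the data in a neutral format like this makes it reusable whether the model is later fine-tuned through a hosted service or on self-managed GPUs.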

Disadvantages of Open Source LLMs

| Disadvantage | Explanation |
|---|---|
| Infrastructure Complexity | Requires GPU servers and deployment pipelines. |
| Operational Overhead | Monitoring and scaling must be managed internally. |
| Performance Tuning | Optimization requires ML engineering expertise. |
| Hardware Cost | GPU infrastructure can be expensive. |
| Maintenance Responsibility | Updates and security are self-managed. |

Alternatives and Hybrid Approaches

| Approach | Description | Compared to OpenAI API and Open Source LLMs |
|---|---|---|
| Managed Open Source Hosting | Cloud providers host open source models. | Combines easier operations with model flexibility. |
| Hybrid AI Architecture | Uses both APIs and local models together. | Balances cost, privacy, and performance. |
| Fine-Tuned Smaller Models | Specialized lightweight custom models. | Cheaper inference but narrower capabilities. |
| Agentic AI Systems | AI systems using tools and workflows. | Can operate with both hosted and open models. |
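One simple form of the hybrid architecture is a router that keeps sensitive or offline traffic on a self-hosted model and sends everything else to a hosted API. The sketch below assumes both backends accept the same OpenAI-style chat request, so only the base URL and model name differ; the routing rule, the `Backend` type, the URLs, and the model names are illustrative assumptions. A production router might also weigh cost, latency, or task difficulty.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Backend:
    name: str
    base_url: str
    model: str


# Hypothetical backends, both assumed to speak the same OpenAI-style chat API.
HOSTED = Backend("hosted", "https://api.openai.com/v1", "gpt-4o-mini")
LOCAL = Backend("local", "http://localhost:8000/v1", "meta-llama/Llama-3.1-8B-Instruct")


def choose_backend(contains_sensitive_data: bool, network_available: bool = True) -> Backend:
    """Keep sensitive or offline traffic on the local model; send the rest to the hosted API."""
    if contains_sensitive_data or not network_available:
        return LOCAL
    return HOSTED


if __name__ == "__main__":
    print(choose_backend(contains_sensitive_data=True).name)   # local
    print(choose_backend(contains_sensitive_data=False).name)  # hosted
```

Putting the decision in one function keeps the privacy policy auditable and makes it easy to change which workloads use which backend as costs or requirements shift.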

Summary

The OpenAI API and open source LLMs represent two major approaches to deploying AI systems. The OpenAI API offers simplicity, scalability, and access to advanced managed models, making it ideal for rapid development and production-ready applications. Open source LLMs provide greater customization, privacy, and infrastructure control, making them suitable for enterprise, regulated, and offline environments. The best choice depends on factors such as scalability, compliance, operational expertise, infrastructure budget, latency requirements, and long-term AI strategy.