Transformer architecture in LLMs

The Transformer is a type of neural network architecture designed to process sequences (like text) by looking at all parts of the input at once rather than step-by-step.

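Its defining idea is the attention mechanism, which lets the model weigh how relevant each word is to every other word in the same input.

Instead of reading like a human (left → right), a Transformer processes the whole sentence at once and works out the relationships between all pairs of words in parallel. (In GPT-style models that generate text, a causal mask lets each word attend only to the words before it, but all positions are still computed in parallel during training.)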
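To make the attention idea concrete, here is a minimal sketch of scaled dot-product self-attention in plain Python. It is a toy under stated assumptions: the three 2-D “word” vectors are made up, and they are used directly as queries, keys and values, whereas a real model first multiplies the token embeddings by learned projection matrices. The helper names (`softmax`, `dot`, `self_attention`) are illustrative, not a library API.

```python
import math


def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def self_attention(queries, keys, values):
    """Scaled dot-product attention over a whole sequence.

    Returns (outputs, weights); weights[i][j] is how much token i attends to token j.
    """
    d = len(keys[0])
    outputs, weights = [], []
    # Each query is handled independently of the others, so a real
    # implementation computes all positions at once (one matrix product).
    for q in queries:
        w = softmax([dot(q, k) / math.sqrt(d) for k in keys])
        # Output = weighted average of the value vectors.
        out = [sum(w[j] * values[j][c] for j in range(len(values)))
               for c in range(len(values[0]))]
        weights.append(w)
        outputs.append(out)
    return outputs, weights


# Toy "word" vectors (hypothetical); a real model would first apply learned
# query/key/value projections to the token embeddings.
x = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
out, w = self_attention(x, x, x)
for row in w:
    print([round(v, 3) for v in row])
```

Each row of `w` sums to 1 and records how strongly one word attends to every word in the input. The first vector, `[1, 0]`, gives equal and larger weight to itself and to `[1, 1]` than to the orthogonal `[0, 1]`.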

Why do we use transformers?

Before Transformers, models like RNNs and LSTMs processed text sequentially. That caused problems:

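• Slow training (each step must wait for the previous one, so there is little parallelism)

• Difficulty remembering long-range context (“the subject from 20 words ago”)

• Vanishing and exploding gradients (information from early words fades or blows up as it passes through many steps)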
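The gradient problem can be seen with a deliberately simplified model: a scalar linear recurrence h_t = w * h_{t-1} + x_t. This is an assumption for illustration only; real RNNs use weight matrices and nonlinearities, where repeated multiplication by step-to-step Jacobians plays the same role. Backpropagating from the last step to the first multiplies the gradient by w once per step. The function name is hypothetical.

```python
def gradient_through_time(w, steps):
    """d h_T / d h_0 for the toy recurrence h_t = w * h_{t-1} + x_t.

    By the chain rule each step contributes a factor d h_t / d h_{t-1} = w,
    so the gradient after `steps` steps is w ** steps.
    """
    grad = 1.0
    for _ in range(steps):
        grad *= w
    return grad


for w in (0.5, 0.9, 1.1):
    print(f"w={w}: gradient after 20 steps = {gradient_through_time(w, 20):.3g}")
```

Over 20 steps (the “20 words ago” case) this gives roughly 9.5e-07 for w = 0.5, 0.12 for w = 0.9 and 6.7 for w = 1.1: the signal from early words either vanishes or explodes.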

Transformers solve this by:

• Parallel processing → much faster training on GPUs

• Better context handling → any word can attend directly to any other word, however far apart

• Scalability → works extremely well when scaled to billions of parameters

This is why essentially all modern LLMs (GPT, Claude, etc.) are Transformer-based.

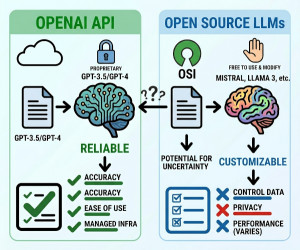

Who needs and uses transformers?

Pretty much everyone working with modern AI:

• Tech companies: OpenAI, Google, Meta, Anthropic

• Startups: building chatbots, copilots, search tools

• Researchers: pushing state-of-the-art NLP and multimodal models

• Developers: via libraries like PyTorch or TensorFlow

• Even non-NLP fields: vision (ViTs), audio, biology (protein folding)

Key components of Transformers (intuitive view)

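A Transformer is built from repeating blocks. Inside each block:

• Self-attention → “Which words should I focus on?”

• Feedforward layers → “What do I compute from that information?”

Alongside the blocks:

• Positional encoding → “What order are the words in?” Attention on its own ignores word order, so position information must be supplied separately. In the original Transformer it is added to the word embeddings once, before the first block; many newer LLMs instead inject it inside every attention layer (e.g. rotary position embeddings).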

Think of it like: Read everything → decide what matters → process → repeat many times
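The block structure above can be sketched in plain Python. This is a simplified illustration under stated assumptions, not a faithful implementation: it uses the sinusoidal positional encoding from the original Transformer paper, skips the learned query/key/value projections and the multi-head split, uses random weights, applies layer normalization without learned scale and shift, and reuses the same weights for every layer only for brevity (real models learn separate weights per layer). It also includes the residual connections that standard blocks wrap around each step. All function names are hypothetical.

```python
import math
import random


def positional_encoding(seq_len, d_model):
    """Sinusoidal encoding from the original Transformer paper:
    PE[pos][2k] = sin(pos / 10000^(2k/d_model)), PE[pos][2k+1] = cos(same angle)."""
    pe = []
    for pos in range(seq_len):
        row = []
        for i in range(d_model):
            angle = pos / (10000 ** ((i - i % 2) / d_model))
            row.append(math.sin(angle) if i % 2 == 0 else math.cos(angle))
        pe.append(row)
    return pe


def softmax(xs):
    m = max(xs)
    exps = [math.exp(v - m) for v in xs]
    total = sum(exps)
    return [e / total for e in exps]


def attention(x):
    """Self-attention with queries = keys = values = x (no learned projections)."""
    d = len(x[0])
    out = []
    for q in x:
        w = softmax([sum(a * b for a, b in zip(q, k)) / math.sqrt(d) for k in x])
        out.append([sum(w[j] * x[j][c] for j in range(len(x))) for c in range(d)])
    return out


def layer_norm(v, eps=1e-5):
    """Normalize one token vector to zero mean and unit variance."""
    mean = sum(v) / len(v)
    var = sum((a - mean) ** 2 for a in v) / len(v)
    return [(a - mean) / math.sqrt(var + eps) for a in v]


def feedforward(v, w1, w2):
    """Position-wise MLP: ReLU(v @ w1) @ w2, applied to each token on its own."""
    hidden = [max(0.0, sum(v[r] * w1[r][c] for r in range(len(v))))
              for c in range(len(w1[0]))]
    return [sum(hidden[r] * w2[r][c] for r in range(len(hidden)))
            for c in range(len(w2[0]))]


def transformer_block(x, w1, w2):
    # 1) Self-attention: every token looks at every other token (+ residual, norm).
    attn = attention(x)
    x = [layer_norm([a + b for a, b in zip(xi, ai)]) for xi, ai in zip(x, attn)]
    # 2) Feedforward: each token is processed independently (+ residual, norm).
    return [layer_norm([a + b for a, b in zip(xi, feedforward(xi, w1, w2))]) for xi in x]


random.seed(0)
seq_len, d_model, d_ff = 4, 8, 16
embeddings = [[random.gauss(0, 1) for _ in range(d_model)] for _ in range(seq_len)]
pe = positional_encoding(seq_len, d_model)
# Position information is added once, before the first block.
x = [[e + p for e, p in zip(er, pr)] for er, pr in zip(embeddings, pe)]
w1 = [[random.gauss(0, 0.1) for _ in range(d_ff)] for _ in range(d_model)]
w2 = [[random.gauss(0, 0.1) for _ in range(d_model)] for _ in range(d_ff)]
for _ in range(2):  # "repeat many times"; weights shared here only for brevity
    x = transformer_block(x, w1, w2)
```

The output has the same shape as the input (one vector per token), which is what lets blocks be stacked as many times as desired.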

Real-life analogies for Transformers

Imagine you’re in a meeting reading a long email thread:

• Instead of reading it message by message, you scan the entire thread at once.

• You highlight the important parts.

• You connect related points across the whole discussion.

That’s what a Transformer does with text.

Another analogy: it’s like having a perfect cross-referencing system, where every word can instantly look at every other word.

Alternatives to Transformers (and how they differ)

1. RNNs (Recurrent Neural Networks)

• Process text step-by-step

• Good for short sequences

• Struggle with long context

Analogy: reading a book one word at a time with limited memory
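A vanilla RNN can be sketched as a loop in which each hidden state depends on the previous one, which is exactly why it cannot be parallelized across time steps. This toy uses a single scalar hidden state and hand-picked weights (both assumptions for illustration; real RNNs use vectors and learned matrices), and the function name is hypothetical.

```python
import math


def rnn(inputs, w_x, w_h, b=0.0):
    """Vanilla scalar RNN: h_t = tanh(w_x * x_t + w_h * h_{t-1} + b).

    The loop cannot be parallelized over time: step t needs h_{t-1}.
    """
    h = 0.0
    states = []
    for x in inputs:
        h = math.tanh(w_x * x + w_h * h + b)
        states.append(h)
    return states


# Only the first input is nonzero; watch its trace fade from the hidden state.
states = rnn([1.0] + [0.0] * 9, w_x=1.0, w_h=0.5)
print([round(s, 4) for s in states])
```

Because w_h = 0.5, the first word’s contribution shrinks at every step and is down to about 0.0014 by the tenth step: the “limited memory” of the analogy.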

2. LSTMs (Long Short-Term Memory networks)

• RNN variant with gates (input, forget, output) that control what is written to, kept in, and read from a memory cell

• Better at longer dependencies than vanilla RNNs

• Still sequential and slower

Analogy: reading sequentially but taking occasional notes
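An LSTM adds a cell state and three gates to the same sequential loop. The sketch below implements one step of a scalar LSTM cell using the standard gate equations; the weights in the hypothetical `params` dictionary are hand-picked assumptions chosen so that the forget gate stays almost fully open, not values from any trained model.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def lstm_step(x, h_prev, c_prev, p):
    """One step of a scalar LSTM cell; p holds per-gate weights and biases."""
    f = sigmoid(p["wf"] * x + p["uf"] * h_prev + p["bf"])    # forget gate: keep old memory?
    i = sigmoid(p["wi"] * x + p["ui"] * h_prev + p["bi"])    # input gate: write new info?
    o = sigmoid(p["wo"] * x + p["uo"] * h_prev + p["bo"])    # output gate: expose memory?
    g = math.tanh(p["wg"] * x + p["ug"] * h_prev + p["bg"])  # candidate content
    c = f * c_prev + i * g  # cell state: the "notes" carried forward
    h = o * math.tanh(c)
    return h, c


params = {"wf": 0.0, "uf": 0.0, "bf": 5.0,   # forget gate ~0.993: keep memory
          "wi": 5.0, "ui": 0.0, "bi": -2.5,  # input gate opens only for large x
          "wo": 0.0, "uo": 0.0, "bo": 0.0,
          "wg": 1.0, "ug": 0.0, "bg": 0.0}

h, c = 0.0, 0.0
for x in [1.0] + [0.0] * 9:  # still sequential: each step needs the previous h and c
    h, c = lstm_step(x, h, c, params)
print(round(c, 3))
```

Fed one nonzero input followed by nine zeros, the cell state still holds about 0.66 after ten steps, because the near-1 forget gate carries the “notes” forward instead of squashing them at every step.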

3. CNNs for text

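• Slide small filters over neighboring words to detect local patterns (similar to n-grams)

• Fast and parallel, but each layer sees only a small window, so global understanding requires stacking many layers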

Analogy: spotting phrases but missing overall meaning
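A text CNN slides a small filter over neighboring tokens, so each output sees only a local window, much like an n-gram. This plain-Python sketch uses one scalar feature per token and a hand-picked width-3 kernel (assumptions; real models convolve over embedding vectors with many learned filters), and computes how far a stack of such layers can see. Function names are hypothetical.

```python
def conv1d(seq, kernel):
    """Valid 1-D convolution (cross-correlation, as deep-learning libraries compute it).

    output[t] depends only on seq[t : t + len(kernel)], a local window like a trigram.
    """
    k = len(kernel)
    return [sum(seq[t + j] * kernel[j] for j in range(k))
            for t in range(len(seq) - k + 1)]


def receptive_field(num_layers, kernel_size):
    """Number of input tokens one output position can 'see' after stacking layers."""
    return 1 + num_layers * (kernel_size - 1)


tokens = [0, 0, 1, 0, 0, 0, 0, 0]  # toy feature values, one per token
print(conv1d(tokens, [1, 1, 1]))  # → [1, 1, 1, 0, 0, 0]
print(receptive_field(1, 3), receptive_field(10, 3))  # → 3 21
```

Relating two words 50 positions apart would need a receptive field of at least 51 tokens, i.e. about 25 stacked width-3 layers, whereas a single self-attention layer connects them directly.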

Why Transformers won

They combine:

• Global context awareness

• Parallel computation

• Strong scaling behavior

That combination turned out to dominate everything else.

Downsides / limitations of Transformers

Transformers aren’t perfect:

• Expensive (compute + memory heavy)

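• Context window limits (they can attend only over a fixed maximum number of tokens, so they cannot handle unlimited text)

• Quadratic complexity (every token is compared with every other token, so attention cost grows with the square of the input length)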

This is why newer research explores efficient variants (like sparse attention, linear attention).
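The quadratic cost is easy to see by counting attention scores. The sketch below compares full self-attention with a sliding-window form of sparse attention, in which each token attends only to neighbors within a fixed window. The window size of 128 is an arbitrary assumption for illustration, the function names are hypothetical, and counting scores ignores constants such as head count and embedding size.

```python
def full_attention_scores(n):
    """Full self-attention computes one score per (query, key) pair: n * n."""
    return n * n


def sliding_window_scores(n, window):
    """Sparse 'local' attention: each token attends only to keys within `window`
    positions on either side (clipped at the sequence edges)."""
    return sum(min(n, i + window + 1) - max(0, i - window) for i in range(n))


for n in (1_000, 2_000, 4_000):
    print(n, full_attention_scores(n), sliding_window_scores(n, 128))
```

Doubling the input length quadruples the full-attention count (1,000,000 → 4,000,000 → 16,000,000), while the windowed count only roughly doubles. The trade-off is that distant tokens are no longer compared directly within a single layer.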