Prompt engineering in LLMs

Fine-tuning changes the model itself; prompt engineering is about getting better results without changing the model at all.

Prompt engineering is the practice of designing inputs (prompts) so a language model produces the desired output.

A “prompt” isn’t just a question. It can include:

• Instructions

• Examples

• Constraints

• Formatting rules

• Context

Weak prompt example: Explain AI

Engineered prompt: Explain artificial intelligence in simple terms for a 12-year-old. Use 3 short paragraphs and a real-world analogy.

Same model → very different output.
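To make the difference concrete, here is a minimal sketch that assembles a prompt from the components listed above. The function name `build_prompt` and its parameters are illustrative assumptions, not part of any library; it only builds a string and does not call a model.

```python
def build_prompt(task, audience=None, constraints=(), context=None):
    """Assemble a prompt string from optional components (hypothetical helper)."""
    parts = []
    if context:
        parts.append(f"Context: {context}")
    # The instruction, optionally tailored to an audience.
    instruction = task if audience is None else f"{task} for {audience}"
    parts.append(instruction.rstrip(".") + ".")
    # Constraints and formatting rules become extra sentences.
    parts.extend(c.rstrip(".") + "." for c in constraints)
    return " ".join(parts)


weak = build_prompt("Explain AI")
engineered = build_prompt(
    "Explain artificial intelligence in simple terms",
    audience="a 12-year-old",
    constraints=["Use 3 short paragraphs and a real-world analogy"],
)

print(weak)        # Explain AI.
print(engineered)  # Explain artificial intelligence in simple terms for a 12-year-old. Use 3 short paragraphs and a real-world analogy.
```

Keeping the components as separate arguments makes it easy to change one of them (say, the audience) and compare the outputs.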

Why do we use prompt engineering?

Because LLMs are highly sensitive to input phrasing.

Prompt engineering helps you:

• Get more accurate answers

• Control tone and structure

• Reduce ambiguity

• Avoid unnecessary tokens (cost)

• Improve reliability without retraining

It’s often the fastest and cheapest way to improve results.

Who uses prompt engineering?

Everyone who uses LLMs seriously:

• Developers

• Product teams

• Analysts

• Writers

• AI engineers building tools and agents

• Non-technical users refining outputs in daily workflows

It’s one of the rare AI skills that’s both:

• beginner-friendly

• and deeply sophisticated at scale

Core techniques of prompt engineering

1. Clear instructions

Be explicit about what you want: Summarize this in 3 bullet points.

2. Role prompting

Assign a role: You are a senior software engineer. Review this code.

3. Few-shot prompting

Provide examples:

Input: 2+2 → Output: 4

Input: 3+5 → Output: 8

Input: 7+6 → Output:

4. Chain-of-thought prompting

Encourage step-by-step reasoning: Explain your reasoning step by step.

5. Output constraints

Control format: Return the answer as valid JSON.
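The techniques above are often combined in a single request. The sketch below is one way to do that, under some assumptions: the `system`/`user` message list mirrors a common chat format, but exact field names depend on the provider; `build_messages`, `parse_reply`, and the JSON keys `reasoning` and `answer` are hypothetical names chosen for illustration; and `sample_reply` is a hand-written stand-in, not real model output.

```python
import json


def build_messages(role, examples, task):
    """Build a chat-style message list that combines several prompting techniques."""
    system = (
        f"You are {role}. "                       # role prompting
        "Explain your reasoning step by step. "   # chain-of-thought prompting
        'Return the answer as valid JSON with the keys "reasoning" and "answer".'  # output constraint
    )
    # Few-shot prompting: worked examples in the same format we expect back.
    shots = [
        f"Input: {x} → Output: " + json.dumps({"reasoning": why, "answer": y})
        for x, why, y in examples
    ]
    user = "\n".join(shots + [f"Input: {task} → Output:"])
    return [
        {"role": "system", "content": system},
        {"role": "user", "content": user},
    ]


def parse_reply(reply, required_keys=("reasoning", "answer")):
    """Return the reply as a dict, or None if it is not the JSON object we asked for."""
    try:
        data = json.loads(reply)
    except json.JSONDecodeError:
        return None
    if not isinstance(data, dict) or any(k not in data for k in required_keys):
        return None
    return data


messages = build_messages(
    "a careful math tutor",
    [("2+2", "2 plus 2 is 4.", "4"), ("3+5", "3 plus 5 is 8.", "8")],
    "7+6",
)

# Hand-written stand-in for a model reply, used only to exercise the parser.
sample_reply = '{"reasoning": "7 + 3 = 10, and 10 + 3 = 13.", "answer": "13"}'
parsed = parse_reply(sample_reply)
print(parsed["answer"])  # 13
```

Asking for JSON does not guarantee you get it, so checking the reply (and retrying or falling back when `parse_reply` returns `None`) is usually worth the few extra lines.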

Real-life analogies for prompt engineering

1. Giving instructions to a human

• Vague: “Do this report”

• Clear: “Write a 1-page summary with 3 key insights and a conclusion”

Better instructions → better results.

2. Google search (but smarter)

• Bad query → irrelevant results

• Well-phrased query → exactly what you need

Prompting is like talking to a very literal, very powerful assistant.

Relationship of prompt engineering to other concepts

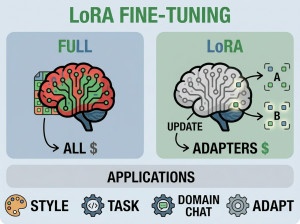

Fine-tuning

Prompt engineering = no training, instant

Fine-tuning = training required, often more consistent on the target task

Prompting is usually tried first

Tokenization

Prompts are split into tokens before the model processes them

Small wording changes → different token splits → potentially different outputs
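A toy illustration of how a one-letter change can alter the token split. This is only a sketch: real tokenizers use vocabularies learned from data (for example with byte-pair encoding), whereas `TOY_VOCAB` and the greedy longest-match rule here are made-up assumptions for demonstration.

```python
# Hypothetical vocabulary; real tokenizers have tens of thousands of learned entries.
TOY_VOCAB = {"summar", "ize", "is"}


def toy_tokenize(text, vocab=TOY_VOCAB):
    """Greedy longest-match tokenizer; unknown characters become single-character tokens."""
    tokens, i = [], 0
    while i < len(text):
        # Try the longest candidate substring first.
        for j in range(len(text), i, -1):
            if text[i:j] in vocab:
                tokens.append(text[i:j])
                i = j
                break
        else:
            # No vocabulary entry matched: fall back to one character.
            tokens.append(text[i])
            i += 1
    return tokens


print(toy_tokenize("summarize"))  # ['summar', 'ize']
print(toy_tokenize("summarise"))  # ['summar', 'is', 'e']
```

The two spellings mean the same thing to a reader, but the model receives different token sequences, which is one reason phrasing can matter.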

Attention mechanism

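The attention mechanism weighs the tokens in your prompt against one another, so what you include, and how you phrase it, influences what the model “pays attention” to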

Alternatives / complements to prompt engineering

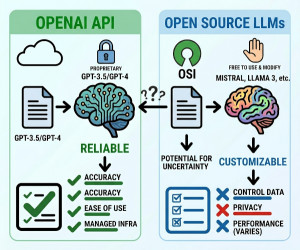

Prompt engineering is often combined with:

1. RAG (Retrieval-Augmented Generation)

Add external knowledge dynamically

2. System prompts

Set global behavior (tone, rules)

3. Fine-tuning

When prompting alone isn’t enough
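As a rough sketch of the RAG idea from item 1, the code below picks the most relevant snippet for a question and places it in the prompt. The word-overlap scoring is a deliberately simple stand-in (real systems typically use embedding-based search), and `retrieve`, `rag_prompt`, and the sample documents are all hypothetical.

```python
import re


def words(text):
    """Lowercased set of word tokens, ignoring punctuation."""
    return set(re.findall(r"\w+", text.lower()))


def retrieve(query, documents, k=1):
    """Return the k documents sharing the most words with the query (toy relevance score)."""
    q = words(query)
    ranked = sorted(documents, key=lambda d: len(q & words(d)), reverse=True)
    return ranked[:k]


def rag_prompt(query, documents, k=1):
    """Build a prompt that includes the retrieved snippets as context."""
    context = "\n".join(f"- {d}" for d in retrieve(query, documents, k))
    return (
        "Use only the context below to answer.\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {query}"
    )


docs = [
    "The office is closed on public holidays.",
    "Refunds are processed within 14 days of the request.",
    "Support is available by email.",
]

print(rag_prompt("How long do refunds take?", docs))
```

The model itself is unchanged; only the prompt grows to carry the external knowledge, which is why RAG pairs naturally with prompt engineering.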

Downsides / disadvantages of prompt engineering

• Not always reliable (outputs can vary across runs)

• Trial-and-error heavy

• Model-dependent (what works on one model may not work on another)

• Limited control compared to training