Embeddings in AI Explained: How Embeddings Work, Use Cases, and Applications in LLMs

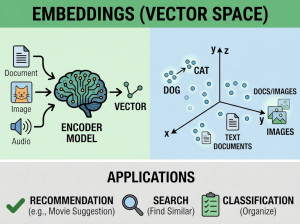

An embedding is a numerical vector representation of data that captures its semantic meaning so machines can compare and process similar content mathematically.

Embeddings convert text, images, audio, or other data into high-dimensional numerical vectors that AI models can understand and compare. Similar meanings produce vectors that are mathematically closer together in vector space, enabling semantic similarity search instead of simple keyword matching. Embeddings are generated using neural networks trained to learn relationships between words, sentences, documents, or multimedia content. They are a foundational technology behind semantic search, recommendation systems, Retrieval-Augmented Generation (RAG), clustering, and personalization systems. Modern AI applications rely heavily on embeddings for efficient retrieval and contextual understanding.

How Do Embeddings Work?

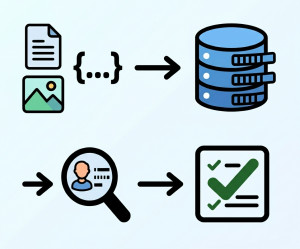

Step-by-Step Embedding Workflow

| Step | Description |
|---|---|
| Input Data | Text, image, audio, or another data type is provided. |
| Neural Network Processing | A trained model processes the input data. |
| Feature Extraction | The model identifies meaningful semantic patterns. |
| Vector Generation | The input is converted into a fixed-length numerical vector. |
| Vector Storage | The embedding is stored, typically in a vector database, for later retrieval. |
| Similarity Search | A similarity measure such as cosine similarity or Euclidean distance finds the nearest vectors. |
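The workflow above can be sketched in plain Python. This is a minimal sketch built on two assumptions. First, a bag-of-words counter over a fixed vocabulary stands in for the trained neural network, so it captures shared words rather than meaning. Second, an in-memory list stands in for the vector database. The names `tokenize`, `embed`, `store`, and `search` are hypothetical.

```python
import math
import re

def tokenize(text: str) -> list[str]:
    # Split input into lowercase word tokens (real models use subword tokenizers)
    return re.findall(r"[a-z0-9]+", text.lower())

corpus = ["I love dogs.", "Dogs are amazing pets.", "The weather is sunny today."]

# Fixed vocabulary: each known word gets one dimension, so every vector has the same length
vocab = {word: i for i, word in enumerate(sorted({t for doc in corpus for t in tokenize(doc)}))}

def embed(text: str) -> list[float]:
    # Toy stand-in for the neural network: count known words, ignore unknown ones,
    # then L2-normalize so that a dot product equals cosine similarity
    vec = [0.0] * len(vocab)
    for tok in tokenize(text):
        if tok in vocab:
            vec[vocab[tok]] += 1.0
    norm = math.sqrt(sum(v * v for v in vec))
    return [v / norm for v in vec] if norm else vec

# "Vector storage": an in-memory list of (text, vector) pairs instead of a vector database
store = [(doc, embed(doc)) for doc in corpus]

def search(query: str) -> str:
    # "Similarity search": return the stored text whose vector has the highest dot product
    q = embed(query)
    return max(store, key=lambda item: sum(a * b for a, b in zip(q, item[1])))[0]

print(len(vocab))         # 11 (every embedding has this many dimensions)
print(search("my dogs"))  # I love dogs.
```

If the toy `embed` were replaced with a real embedding model, the storage and search steps would stay the same. This is why the workflow treats vector generation and retrieval as separate steps.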

Example of How Embeddings Work

Suppose we have these sentences:

• "I love dogs."

• "Dogs are amazing pets."

• "The weather is sunny today."

An embedding model converts each sentence into vectors.

Example (simplified):

"I love dogs" → [0.21, 0.88, 0.54, ...]

"Dogs are amazing pets" → [0.25, 0.85, 0.58, ...]

"The weather is sunny today" → [0.91, 0.12, 0.44, ...]

The first two vectors are closer together (for example, as measured by cosine similarity) because the sentences share a similar meaning. The third vector is farther away because its topic is different.
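Cosine similarity is one way to measure this closeness. The minimal illustration below uses only the three values shown for each simplified vector above. Real embeddings have far more dimensions, so these numbers only demonstrate the calculation.

```python
import math

def cosine_similarity(a: list[float], b: list[float]) -> float:
    # cos(a, b) = (a . b) / (|a| * |b|); values near 1.0 mean the vectors point the same way
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))

love_dogs = [0.21, 0.88, 0.54]
amazing_pets = [0.25, 0.85, 0.58]
sunny_weather = [0.91, 0.12, 0.44]

print(round(cosine_similarity(love_dogs, amazing_pets), 3))   # 0.998
print(round(cosine_similarity(love_dogs, sunny_weather), 3))  # 0.498
```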

Why Do We Use Embeddings?

Embeddings solve a major problem in AI: computers do not naturally understand meaning.

We use embeddings because they enable:

• Semantic understanding

• Similarity search

• Recommendation systems

• Efficient retrieval

• Context-aware AI systems

• Better search relevance

• Clustering and classification

• Personalized experiences

• RAG pipelines

• Multimodal AI systems

When Should We Use Embeddings?

Best Use Cases for Embeddings

• Semantic document search

• AI chatbots

• Retrieval-Augmented Generation (RAG)

• Recommendation engines

• Duplicate detection

• Fraud detection

• Image similarity search

• Audio matching systems

• Content clustering

• Personalized ranking systems

• AI memory systems

When Should We Not Use Embeddings?

• Exact keyword matching systems

• Traditional transactional databases

• Simple rule-based applications

• Very small datasets

• Deterministic logic systems

• Applications without semantic relationships

Types of Embeddings

| Embedding Type | Description | Common Use Case |
|---|---|---|
| Word Embeddings | Represents individual words. | NLP and language analysis. |
| Sentence Embeddings | Represents full sentences. | Semantic search. |
| Document Embeddings | Represents longer passages or whole documents. | RAG systems. |
| Image Embeddings | Represents visual features. | Image similarity search. |
| Audio Embeddings | Represents sound patterns. | Speech recognition. |
| Multimodal Embeddings | Maps multiple data types (e.g., images and text) into a shared vector space. | Cross-modal search, such as finding images from a text query. |

Key Components of Embedding Systems

| Component | Role |
|---|---|
| Embedding Model | Generates vector representations. |
| Tokenizer | Splits data into processable units (e.g., subword tokens for text). |
| Neural Network | Learns semantic relationships. |
| Vector Database | Stores embeddings for retrieval. |
| Similarity Algorithm | Measures vector closeness (e.g., cosine similarity, dot product, or Euclidean distance). |
| Retriever | Finds semantically related content. |

Key Features of Embeddings

| Feature | Benefit |
|---|---|
| Semantic Understanding | Captures contextual meaning. |
| Similarity Search | Finds related content efficiently. |
| High-Dimensional Representation | Encodes complex relationships. |
| Scalability | Supports large AI datasets. |
| Multimodal Capability | Works with text, images, and audio. |
| Efficient Retrieval | Improves AI response relevance. |

Implementation Examples of Embeddings

Example 1: Semantic Search

Workflow:

• Documents are converted into embeddings.

• Embeddings are stored in a vector database.

• User query is embedded.

• Similar vectors are retrieved.

Result: The system finds semantically related documents even if exact keywords do not match.
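A tiny example shows the difference between keyword matching and vector search. The three-dimensional vectors below are invented for illustration and stand in for the output of a real embedding model. The document titles and the query are also made up. `vector_search` is a hypothetical helper that returns the top-k documents by cosine similarity.

```python
import heapq
import math
import re

# Illustrative 3-dimensional vectors invented for this sketch (not real model output)
docs = {
    "How to reset your password": [0.9, 0.1, 0.0],
    "Best hiking trails near Denver": [0.0, 0.2, 0.95],
    "Recovering access to a locked account": [0.8, 0.3, 0.1],
}
query_text = "Forgot my login credentials"
query_vec = [0.85, 0.2, 0.05]  # assumed embedding of query_text

def words(text: str) -> set[str]:
    # Lowercase word set used for exact keyword matching
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def cosine(a: list[float], b: list[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.hypot(*a) * math.hypot(*b))

def vector_search(qvec: list[float], k: int = 2) -> list[str]:
    # Return the k document titles whose vectors are most similar to the query vector
    return heapq.nlargest(k, docs, key=lambda title: cosine(qvec, docs[title]))

# Keyword search: no document shares a word with the query
keyword_hits = [title for title in docs if words(title) & words(query_text)]

print(keyword_hits)              # []
print(vector_search(query_vec))  # the password and locked-account documents
```

Keyword search finds nothing here because the query shares no words with any title. Vector search still surfaces the two account-access documents because their vectors point in a similar direction to the query vector.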

Example 2: RAG Pipeline

Workflow:

• Enterprise documents are chunked.

• Chunks are converted into embeddings.

• Vector search retrieves relevant chunks.

• Retrieved context is sent to the LLM.

Result: More accurate and grounded AI responses.
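A RAG pipeline like this one can be sketched end to end, except for the LLM call. This minimal sketch makes two assumptions: a word-count vector stands in for a real embedding model, and fixed-size word windows serve as the chunking strategy. `chunk_words`, `retrieve`, and `build_prompt` are hypothetical helpers, and the policy text is made up.

```python
import math
import re
from collections import Counter

def chunk_words(text: str, size: int = 8, overlap: int = 2) -> list[str]:
    # Split a document into overlapping windows of `size` words
    words = text.split()
    step = size - overlap
    return [" ".join(words[i:i + size]) for i in range(0, max(len(words) - overlap, 1), step)]

def embed(text: str) -> Counter:
    # Toy stand-in for an embedding model: a sparse word-count vector
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))

def cosine(a: Counter, b: Counter) -> float:
    dot = sum(count * b[word] for word, count in a.items())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0

def retrieve(question: str, index: list[tuple[str, Counter]], k: int = 2) -> list[str]:
    # Vector search step: rank stored chunks by similarity to the embedded question
    q = embed(question)
    ranked = sorted(index, key=lambda item: cosine(q, item[1]), reverse=True)
    return [chunk for chunk, _ in ranked[:k]]

def build_prompt(question: str, context: list[str]) -> str:
    # Prompt template for the LLM; sending it to a model is out of scope here
    joined = "\n".join(f"- {c}" for c in context)
    return f"Answer using only this context:\n{joined}\n\nQuestion: {question}"

policy = ("Employees may work remotely up to three days per week. "
          "Remote work requests must be approved by a manager. "
          "The office cafeteria opens at eight and closes at three. "
          "Expense reports are due by the fifth of each month.")

# Chunk and embed once; in practice this index would live in a vector database
index = [(chunk, embed(chunk)) for chunk in chunk_words(policy)]

question = "How many days can employees work remotely?"
context = retrieve(question, index)
print(build_prompt(question, context))
```

Production pipelines usually chunk by tokens or document structure and use a trained embedding model. The overall shape (chunk, embed, retrieve, assemble context) stays the same.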

Example 3: Recommendation Engine

Workflow:

• User behavior and products are embedded.

• Similarity search identifies related items.

• Personalized recommendations are generated.

Result: Improved personalization and user engagement.
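One simple way to use embeddings for recommendations is to represent a user as the average of the embeddings of items they interacted with, then rank unseen items by similarity. The sketch below assumes that averaging approach, which is one option among several, and uses invented item vectors. `user_vector` and `recommend` are hypothetical helpers.

```python
import math

# Illustrative item embeddings invented for this sketch (not real model output)
items = {
    "running shoes": [0.9, 0.1, 0.0],
    "trail socks": [0.8, 0.2, 0.1],
    "yoga mat": [0.3, 0.9, 0.1],
    "coffee grinder": [0.0, 0.1, 0.95],
    "hydration vest": [0.8, 0.1, 0.1],
}

def cosine(a: list[float], b: list[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.hypot(*a) * math.hypot(*b))

def user_vector(history: list[str]) -> list[float]:
    # Represent the user as the element-wise mean of the items they interacted with
    vecs = [items[name] for name in history]
    return [sum(col) / len(vecs) for col in zip(*vecs)]

def recommend(history: list[str], k: int = 2) -> list[str]:
    # Rank items the user has not seen by similarity to the user vector
    u = user_vector(history)
    candidates = [name for name in items if name not in history]
    return sorted(candidates, key=lambda name: cosine(u, items[name]), reverse=True)[:k]

print(recommend(["running shoes", "trail socks"]))  # ['hydration vest', 'yoga mat']
```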

Most Common Embedding Models

| Embedding Model | Organization | Main Strength |
|---|---|---|
| text-embedding models | OpenAI | High-quality semantic retrieval. |
| Sentence Transformers | Hugging Face | Open-source sentence embeddings. |
| BGE Models | BAAI | Strong multilingual performance. |
| E5 Models | Microsoft | Efficient retrieval optimization. |
| CLIP | OpenAI | Multimodal image-text embeddings. |

Advantages of Embeddings

| Advantage | Explanation |
|---|---|
| Semantic Search | Finds meaning-based matches. |
| Improved AI Context | Enhances relevance and understanding. |
| Scalable Retrieval | Handles large datasets efficiently. |
| Better Recommendations | Improves personalization systems. |
| Multimodal Support | Works across different data types. |

Disadvantages of Embeddings

| Disadvantage | Explanation |
|---|---|
| High Storage Requirements | Large vector datasets consume significant memory and disk space. |
| Computational Cost | Generating embeddings for large datasets can be expensive. |
| Approximate Retrieval | Approximate nearest neighbor (ANN) search trades a small amount of recall for speed. |
| Embedding Drift | Vectors from different model versions are not directly comparable, so changing models usually requires re-embedding stored data. |
| Complex Infrastructure | Requires vector databases and retrieval systems. |
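Storage cost is easy to estimate: raw vector storage equals the number of vectors times the dimensionality times the bytes per value. The sketch below assumes 1 million vectors of 1,536 dimensions, an illustrative size rather than a figure from this article. It compares 32-bit and 16-bit floats and ignores index structures and metadata, which add more storage on top. `raw_vector_bytes` is a hypothetical helper.

```python
def raw_vector_bytes(num_vectors: int, dims: int, bytes_per_value: int = 4) -> int:
    # Raw storage for a dense matrix of embeddings; excludes index structures and metadata
    return num_vectors * dims * bytes_per_value

float32 = raw_vector_bytes(1_000_000, 1536)                     # 4 bytes per value
float16 = raw_vector_bytes(1_000_000, 1536, bytes_per_value=2)  # half precision
print(float32 / 1e9, float16 / 1e9)  # 6.144 3.072 (decimal gigabytes)
```

Halving the bytes per value halves raw storage. This is why lower-precision storage is a common lever when vector datasets grow.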

Alternatives to Embeddings

| Alternative | Description | Compared to Embeddings |
|---|---|---|
| Keyword Search | Exact text matching systems. | Faster but lacks semantic understanding. |
| Knowledge Graphs | Structured relationship databases. | Better reasoning but harder to scale. |
| Rule-Based Systems | Deterministic logic engines. | Reliable but inflexible. |
| Traditional SQL Search | Relational database querying. | Good for structured data, poor for semantic similarity. |

Summary

Embeddings are numerical vector representations that allow AI systems to understand semantic meaning and relationships between data. They are fundamental to modern AI architectures such as semantic search, recommendation systems, vector databases, and Retrieval-Augmented Generation (RAG). Embeddings work by converting input data into high-dimensional vectors where similar meanings are positioned closer together mathematically. This enables AI systems to retrieve relevant information efficiently and provide more intelligent, context-aware responses.