Tokenization in LLMs

Tokenization is the process of converting raw text into tokens—the units a model actually processes.

A token is not always a word. It can be:

• a full word → "cat"

• part of a word → "un" + "believ" + "able"

• punctuation → "!"

• even spaces or special symbols

Example: "LLM is amazing!" → ["LLM", " is", " amazing", "!"]

The exact split depends on the tokenizer design.

Why do we use tokenization?

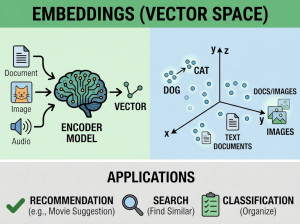

Neural networks don’t understand raw text—they work with numbers.

Tokenization is required to:

• Convert text into discrete units

• Map each token to an ID (number)

• Feed those IDs into the model
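
The three steps above can be sketched with a toy vocabulary. This is only an illustration: the `vocab` table, its IDs, and the `<unk>` fallback token are made up here and do not come from any real tokenizer.

```python
# Toy vocabulary: every token and ID here is invented for illustration.
vocab = {"<unk>": 0, "LLM": 1, " is": 2, " amazing": 3, "!": 4}

def encode(tokens):
    """Map each token string to its integer ID, falling back to <unk>."""
    return [vocab.get(tok, vocab["<unk>"]) for tok in tokens]

def decode(ids):
    """Invert the mapping to turn IDs back into text."""
    id_to_token = {i: tok for tok, i in vocab.items()}
    return "".join(id_to_token[i] for i in ids)

tokens = ["LLM", " is", " amazing", "!"]
ids = encode(tokens)
print(ids)          # [1, 2, 3, 4]
print(decode(ids))  # LLM is amazing!
```

The model only ever sees the list of integers; the strings exist purely on the tokenizer side.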

But beyond that, tokenization directly affects:

• Cost → pricing is often per token

• Speed → more tokens = more computation

• Understanding → a bad split can break a word into pieces that obscure its meaning
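
To make the cost and speed points concrete, here is a rough back-of-the-envelope sketch. The price is a made-up placeholder, not a real rate, and `attention_pairs` is only a crude proxy for compute, based on the fact that standard self-attention compares every token with every other token.

```python
def estimate_cost(num_tokens, price_per_1k_tokens):
    """Per-token pricing: cost grows linearly with the token count."""
    return num_tokens / 1000 * price_per_1k_tokens

def attention_pairs(num_tokens):
    """Standard self-attention scores every token against every token."""
    return num_tokens * num_tokens

HYPOTHETICAL_PRICE_PER_1K = 0.002  # placeholder value, not a real price

for n in (250, 4000):
    cost = estimate_cost(n, HYPOTHETICAL_PRICE_PER_1K)
    print(n, "tokens:", cost, "cost units,", attention_pairs(n), "token pairs")
```

A prompt that is 16 times longer costs 16 times more under linear pricing, while the number of token pairs grows by a factor of 256.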

Who uses tokenization?

Anyone interacting with LLMs, even indirectly:

• Model developers (design tokenizers for training)

• API users (optimize prompts to reduce token usage)

• Prompt engineers (structure inputs efficiently)

• Tool builders (chunk documents for retrieval systems)
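
As an illustration of the document-chunking use case, the sketch below splits a token list into overlapping windows of a fixed size. It assumes a whitespace split as a stand-in for a real tokenizer, and the function name `chunk_tokens` and its parameters are hypothetical.

```python
def chunk_tokens(tokens, max_tokens, overlap=0):
    """Split a token list into windows of at most max_tokens tokens.

    Consecutive windows share `overlap` tokens so that context is not
    cut off abruptly at a chunk boundary.
    """
    if max_tokens <= 0 or not 0 <= overlap < max_tokens:
        raise ValueError("need max_tokens > 0 and 0 <= overlap < max_tokens")
    chunks = []
    start = 0
    step = max_tokens - overlap
    while start < len(tokens):
        chunks.append(tokens[start:start + max_tokens])
        if start + max_tokens >= len(tokens):
            break  # the last window already reaches the end
        start += step
    return chunks

# Whitespace splitting stands in for a real tokenizer here.
tokens = "one two three four five six seven eight nine ten".split()
for chunk in chunk_tokens(tokens, max_tokens=4, overlap=1):
    print(chunk)
```

In a real retrieval system the budget would be counted with the same tokenizer the model uses, since word counts and token counts can differ considerably.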

Libraries that handle this include:

• Hugging Face Transformers

• tiktoken

Common tokenization methods

1. Word-based

Split by spaces: "I love AI" → ["I", "love", "AI"]

Problem: the vocabulary becomes huge, and words not seen during training cannot be represented
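
A minimal sketch of the word-based approach shows the unknown-word problem directly. The helper names and the `<unk>` token are assumptions made for this example.

```python
def build_vocab(corpus):
    """Assign an ID to every distinct whitespace-separated word."""
    vocab = {"<unk>": 0}
    for sentence in corpus:
        for word in sentence.split():
            vocab.setdefault(word, len(vocab))
    return vocab

def encode_words(text, vocab):
    """Look up each word; anything unseen collapses to <unk>."""
    return [vocab.get(word, vocab["<unk>"]) for word in text.split()]

vocab = build_vocab(["I love AI", "I love cats"])
print(encode_words("I love AI", vocab))      # [1, 2, 3]
print(encode_words("I love robots", vocab))  # [1, 2, 0]
```

The word "robots" was never seen when the vocabulary was built, so it maps to the same ID as every other unknown word and its identity is lost.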

2. Character-based

"cat" → ["c", "a", "t"]

Problem: sequences become very long → inefficient to process
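
The trade-off can be seen by counting tokens for the same sentence under both schemes. This is a small sketch with an arbitrary example sentence.

```python
def char_tokenize(text):
    """Every character, including spaces, becomes its own token."""
    return list(text)

text = "Tokenization is amazing"
word_tokens = text.split()
char_tokens = char_tokenize(text)

print(len(word_tokens))       # 3
print(len(char_tokens))       # 23
print(len(set(char_tokens)))  # number of distinct characters needed
```

The character vocabulary is tiny, but the sequence is several times longer than the word-level one.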

3. Subword tokenization (most important)

Used in modern LLMs:

• BPE (Byte Pair Encoding)

• WordPiece

• Unigram LM

Example: "unbelievable" → ["un", "believ", "able"]

This balances:

• vocabulary size

• flexibility for unknown words
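
To show how a subword vocabulary can be learned, here is a simplified sketch of BPE training: start from characters and repeatedly merge the most frequent adjacent pair. The toy word frequencies and function names are assumptions for illustration, and production tokenizers add details omitted here (byte-level handling, end-of-word markers, deterministic tie-breaking).

```python
from collections import Counter

def get_pair_counts(words):
    """Count adjacent symbol pairs, weighted by word frequency."""
    pairs = Counter()
    for symbols, freq in words.items():
        for a, b in zip(symbols, symbols[1:]):
            pairs[(a, b)] += freq
    return pairs

def merge_pair(pair, words):
    """Replace every occurrence of `pair` with a single merged symbol."""
    merged = {}
    for symbols, freq in words.items():
        out, i = [], 0
        while i < len(symbols):
            if i + 1 < len(symbols) and (symbols[i], symbols[i + 1]) == pair:
                out.append(symbols[i] + symbols[i + 1])
                i += 2
            else:
                out.append(symbols[i])
                i += 1
        key = tuple(out)
        merged[key] = merged.get(key, 0) + freq
    return merged

def train_bpe(word_freqs, num_merges):
    """Learn up to num_merges merge rules from a word-frequency table."""
    words = {tuple(word): freq for word, freq in word_freqs.items()}
    merges = []
    for _ in range(num_merges):
        pairs = get_pair_counts(words)
        if not pairs:
            break
        best = max(pairs, key=pairs.get)  # ties go to the first pair seen
        merges.append(best)
        words = merge_pair(best, words)
    return merges, words

# Toy corpus: word -> how often it occurs (made-up numbers).
toy_freqs = {"low": 5, "lower": 2, "newest": 6, "widest": 3}
merges, words = train_bpe(toy_freqs, num_merges=3)
print(merges)
print(list(words))
```

Frequent character sequences such as "est" end up as single tokens, while rarer words stay split into smaller pieces. That is how the method keeps the vocabulary small without losing the ability to represent new words.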

Real-life analogy

Think of tokenization like cutting text into Lego pieces:

• Too big (whole words) → you can’t build new shapes easily

• Too small (characters) → too many pieces, slow to build

• Just right (subwords) → flexible and efficient

Or another way:

It’s like how you chunk information when reading—not letter by letter, not entire paragraphs, but meaningful pieces.

Alternatives / variations of tokenization

Even among Transformer-based models, tokenization choices differ:

Byte-level tokenization

Used in some GPT models:

• Works on raw bytes

• Handles any text (including emojis, code)

More robust, but the resulting tokens are harder for humans to read
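
A short sketch of the byte-level idea: encode the text as UTF-8 and treat each of the 256 possible byte values as a base token. Byte-level tokenizers then typically learn merges on top of these bytes, which this example leaves out. The helper names are hypothetical.

```python
def to_byte_tokens(text):
    """Encode text as UTF-8 and treat every byte (0-255) as a base token."""
    return list(text.encode("utf-8"))

def from_byte_tokens(byte_ids):
    """Reassemble the bytes and decode them back into text."""
    return bytes(byte_ids).decode("utf-8")

print(to_byte_tokens("hi"))       # [104, 105]
print(len(to_byte_tokens("👋")))  # 4

ids = to_byte_tokens("héllo 👋")
print(from_byte_tokens(ids) == "héllo 👋")  # True
```

Nothing is ever out of vocabulary, because any string can be written as bytes. The price is that a single emoji or accented letter takes several base tokens, and a list of byte values is not readable on its own.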

SentencePiece (no pre-tokenization)

Treats the input as a raw character stream and encodes spaces as an ordinary symbol, instead of splitting on spaces first:

• Good for languages without clear word boundaries
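
The core trick can be mimicked in a few lines. SentencePiece replaces each space with the visible marker "▁" (U+2581) before segmenting, so the original text can be restored exactly from the pieces. The sketch below does not use the real library: the helper names are hypothetical and the piece split is hand-picked.

```python
MARKER = "\u2581"  # the "▁" symbol that stands in for a space

def mark_spaces(text):
    """Make whitespace an explicit symbol in the character stream."""
    return text.replace(" ", MARKER)

def restore_spaces(pieces):
    """Concatenate pieces and turn the marker back into spaces."""
    return "".join(pieces).replace(MARKER, " ")

print(mark_spaces("Hello world"))  # Hello▁world

# A hand-picked segmentation; a trained model would choose the split.
pieces = ["Hello", MARKER + "wor", "ld"]
print(restore_spaces(pieces))      # Hello world
```

Because the space travels inside the pieces, no language-specific word splitting is needed and detokenization is lossless.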

Token-free approaches (emerging research)

Some newer ideas try to remove tokenization entirely:

• character-level transformers

• continuous representations

Not yet dominant, but an interesting direction

Downsides / Disadvantages of tokenization

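• Loss of meaning: a split that ignores word structure can obscure what the word means

• Language bias: a tokenizer trained mostly on one language tends to need more tokens for the same content in others

• Fragmentation: rare words become long token sequences

• Hard to debug: tokenization is an invisible layer for most users, so token-level problems are hard to spot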
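
The fragmentation problem can be reproduced with a toy greedy tokenizer. This is a sketch only: the vocabulary is invented, and greedy longest-match with a single-character fallback is one simple strategy, not how any particular production tokenizer works.

```python
def greedy_tokenize(word, vocab):
    """Greedy longest-match split; characters with no match become single tokens."""
    tokens, i = [], 0
    while i < len(word):
        # Try the longest candidate starting at position i first.
        for j in range(len(word), i, -1):
            if word[i:j] in vocab:
                tokens.append(word[i:j])
                i = j
                break
        else:
            # Nothing in the vocabulary matches: fall back to one character.
            tokens.append(word[i])
            i += 1
    return tokens

toy_vocab = {"un", "believ", "able", "the", "cat"}  # invented for illustration
print(greedy_tokenize("unbelievable", toy_vocab))  # ['un', 'believ', 'able']
print(greedy_tokenize("zyzzyva", toy_vocab))       # ['z', 'y', 'z', 'z', 'y', 'v', 'a']
```

A common word costs three tokens, while a rare word of about half the length costs seven. Printing the pieces like this is also a practical way to inspect an otherwise invisible layer.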