LoRA Fine-Tuning Explained: How Low-Rank Adaptation Works, Steps, and Examples

LoRA (Low-Rank Adaptation) fine-tuning is a parameter-efficient training technique that adapts Large Language Models (LLMs) by training small low-rank matrices instead of updating all model parameters.

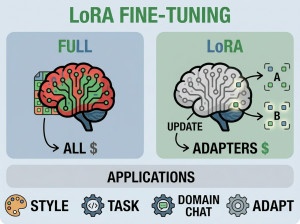

Traditional fine-tuning updates every parameter of a Large Language Model, often billions of them, which demands substantial GPU memory, storage, and compute. LoRA addresses this by freezing the original pretrained weights and injecting small trainable low-rank matrices into selected layers, typically the attention projections. During training, only these lightweight adapter parameters are updated while the base model remains unchanged. Because gradients and optimizer state are kept only for the adapters, memory usage, training time, and infrastructure cost drop sharply, while task performance usually stays close to that of full fine-tuning. LoRA has become one of the most widely used techniques for customizing open source LLMs efficiently.

How Does LoRA Fine-Tuning Work?

Core Idea Behind LoRA

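Instead of modifying the original weight matrix directly:

Original Weight Matrix: W (d × k)

LoRA represents the weight update as the product of two smaller matrices, so the adapted layer uses:

W + ΔW

Where:

ΔW = B × A

• A = low-rank down projection matrix (r × k)

• B = low-rank up projection matrix (d × r)

The rank r is much smaller than d and k, so A and B together hold r × (d + k) parameters instead of d × k.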

Only matrices A and B are trained.

The original model weights remain frozen.
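
The forward pass of a single LoRA-adapted layer can be sketched in NumPy as follows. This is a minimal illustration rather than any library's implementation: the layer sizes, the rank, the `alpha` scaling value, and the helper name `lora_forward` are all assumptions chosen for the example. It follows the common convention that the update is the up projection times the down projection (B @ A), scaled by alpha / r, with B initialized to zero so the adapted layer starts out identical to the base layer.

```python
import numpy as np

rng = np.random.default_rng(0)

d_out, d_in, r = 6, 4, 2   # hypothetical layer sizes and LoRA rank
alpha = 4                  # hypothetical scaling hyperparameter

W = rng.normal(size=(d_out, d_in))           # frozen pretrained weight
A = rng.normal(scale=0.01, size=(r, d_in))   # down projection: d_in -> r
B = np.zeros((d_out, r))                     # up projection: r -> d_out, starts at zero


def lora_forward(x, W, A, B, alpha, r):
    """Return W @ x plus the scaled low-rank update (alpha / r) * B @ (A @ x)."""
    base = W @ x
    update = B @ (A @ x)   # never builds the full d_out x d_in update matrix
    return base + (alpha / r) * update


x = rng.normal(size=d_in)
y_init = lora_forward(x, W, A, B, alpha, r)   # equals W @ x because B is zero

# Stand-in for a trained B (random values, purely illustrative).
B_trained = rng.normal(scale=0.1, size=(d_out, r))
y_adapted = lora_forward(x, W, A, B_trained, alpha, r)

# The equivalent dense update has the same shape as W but rank at most r.
delta_W = (alpha / r) * (B_trained @ A)
```

Computing `B @ (A @ x)` instead of `(B @ A) @ x` keeps the extra work proportional to r × (d_in + d_out) per input vector.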

Step-by-Step LoRA Workflow

| Step | Description |
|---|---|
| Load Base Model | Import a pretrained LLM such as Llama or Mistral. |
| Freeze Original Weights | Prevent updates to the main model parameters. |
| Inject LoRA Layers | Add trainable low-rank adapter matrices. |
| Prepare Dataset | Create task-specific training data. |
| Train Adapters | Only LoRA parameters are optimized. |
| Save Adapter Weights | Store lightweight LoRA checkpoint files. |
| Inference | Combine base model with LoRA adapters during generation. |
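
The freeze, inject, and train steps can be illustrated with a toy example. The sketch below is not how a real LLM is trained: it uses a single linear layer, synthetic data, and hand-written gradient descent, and every size and hyperparameter is an assumption picked for illustration. Its purpose is to show that only A and B receive updates while W stays untouched.

```python
import numpy as np

rng = np.random.default_rng(0)
d_out, d_in, r, n = 8, 8, 2, 64   # hypothetical sizes

# "Load base model": a single frozen weight matrix stands in for the LLM.
W = rng.normal(size=(d_out, d_in))
W_before = W.copy()

# "Prepare dataset": synthetic targets produced by the base weight plus a rank-r shift.
shift = 0.1 * rng.normal(size=(d_out, r)) @ rng.normal(size=(r, d_in))
X = rng.normal(size=(n, d_in))
Y = X @ (W + shift).T

# "Inject LoRA layers": A is small random, B is zero, so training starts from the base model.
A = rng.normal(scale=0.1, size=(r, d_in))
B = np.zeros((d_out, r))


def loss_fn(A, B):
    pred = X @ (W + B @ A).T
    return float(np.mean((pred - Y) ** 2))


# "Train adapters": plain gradient descent on the mean squared error.
lr = 0.1
initial_loss = loss_fn(A, B)
for step in range(500):
    err = X @ (W + B @ A).T - Y               # (n, d_out)
    grad_delta = 2.0 * err.T @ X / err.size   # gradient w.r.t. the dense update B @ A
    grad_B = grad_delta @ A.T                 # chain rule through B @ A
    grad_A = B.T @ grad_delta
    B -= lr * grad_B                          # only the adapter matrices change
    A -= lr * grad_A
final_loss = loss_fn(A, B)

print(f"loss before: {initial_loss:.4f}, after: {final_loss:.4f}")
print("base weight unchanged:", np.array_equal(W, W_before))
```

In a real setup the optimizer, batching, and gradient computation are handled by the training framework, but the split between frozen and trainable tensors is the same.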

Why Use LoRA Fine-Tuning?

LoRA is used because full fine-tuning of modern LLMs is extremely expensive.

Main reasons include:

• Lower GPU memory usage

• Faster training

• Reduced storage requirements

• Cheaper fine-tuning

• Easier experimentation

• Multiple adapters for different tasks

• Better scalability for enterprises

• Enables local fine-tuning on consumer GPUs
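
The savings behind these points come from simple arithmetic. As a rough sketch, the snippet below compares the number of trainable parameters for one weight matrix under full fine-tuning and under LoRA at several ranks. The 4096 × 4096 shape and the helper names are assumptions for illustration, not taken from a specific model.

```python
def full_param_count(d_out: int, d_in: int) -> int:
    """Trainable parameters when the whole weight matrix is updated."""
    return d_out * d_in


def lora_param_count(d_out: int, d_in: int, r: int) -> int:
    """Trainable parameters for B (d_out x r) plus A (r x d_in)."""
    return r * (d_in + d_out)


d_out = d_in = 4096   # hypothetical projection size
full = full_param_count(d_out, d_in)

for r in (4, 8, 16, 64):
    lora = lora_param_count(d_out, d_in, r)
    print(f"r={r:>2}: {lora:>9,} trainable vs {full:,} full ({lora / full:.2%})")
```

At rank 8 this works out to 65,536 trainable parameters against 16,777,216 for the full matrix, roughly 0.39 percent.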

When Should We Use LoRA Fine-Tuning?

Best Use Cases for LoRA

• Domain adaptation

• Enterprise AI customization

• Customer support assistants

• Medical or legal AI systems

• AI coding assistants

• Instruction tuning

• Chatbot personalization

• Resource-constrained environments

• Rapid experimentation

• Multi-task adapter systems

When NOT to Use LoRA?

• Training models completely from scratch

• Cases requiring full model architecture modification

• Extremely small models where full fine-tuning is affordable

• Applications needing maximum possible model adaptation

• Deployments where managing separate adapter files is undesirable and merging them into the base weights is not an option

Key Components of LoRA Fine-Tuning

| Component | Role |
|---|---|
| Base Model | The pretrained frozen LLM. |
| LoRA Adapters | Trainable low-rank matrices. |
| Training Dataset | Task-specific examples. |
| Optimizer | Updates LoRA parameters. |
| Inference Engine | Runs model generation with adapters. |
| Checkpoint Storage | Saves lightweight adapter weights. |

Key Features of LoRA

| Feature | Benefit |
|---|---|
| Parameter Efficiency | Trains very few parameters. |
| Low Memory Usage | Works on smaller GPUs. |
| Fast Training | Reduces fine-tuning time. |
| Modular Adapters | Supports multiple task-specific adapters. |
| Cost Efficiency | Reduces infrastructure expenses. |
| Preserves Base Model | Original pretrained model remains unchanged. |
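
The modular-adapter idea can be sketched as one shared frozen weight plus a dictionary of small (B, A) pairs that are selected per request. The adapter names, sizes, and random values below are hypothetical stand-ins for trained adapters, and the `forward` helper is an illustration rather than a library API.

```python
import numpy as np

rng = np.random.default_rng(0)
d_out, d_in, r = 16, 16, 2   # hypothetical sizes

W = rng.normal(size=(d_out, d_in))   # one shared, frozen base weight


def make_adapter():
    """Hypothetical stand-in for a trained adapter: a (B, A) pair."""
    B = rng.normal(scale=0.1, size=(d_out, r))
    A = rng.normal(scale=0.1, size=(r, d_in))
    return B, A


# Task-specific adapters that all reuse the same base weight.
adapters = {"support": make_adapter(), "coding": make_adapter()}


def forward(x, adapter_name=None):
    """Run the base layer, optionally adding the selected adapter's update."""
    y = W @ x
    if adapter_name is not None:
        B, A = adapters[adapter_name]
        y = y + B @ (A @ x)
    return y


x = rng.normal(size=d_in)
y_base = forward(x)
y_support = forward(x, "support")
y_coding = forward(x, "coding")

adapter_params = sum(B.size + A.size for B, A in adapters.values())
print(f"base params: {W.size}, params across all adapters: {adapter_params}")
```

Switching tasks only changes which small pair is looked up, so the base weight is stored and loaded once.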

Real-World Examples of LoRA Fine-Tuning

Example 1: Customer Support Chatbot

Goal: Fine-tune an LLM using company support tickets and FAQs.

Workflow:

• Load a pretrained model.

• Add LoRA adapters.

• Train on customer support conversations.

• Deploy lightweight adapters.

Result: The chatbot learns company-specific terminology and workflows.
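
Before training, the support conversations have to be turned into prompt and completion pairs. The sketch below shows one possible way to do that. The ticket records, the field names, and the prompt template are all invented for illustration; real data would come from the company's own ticketing export, and the template should match whatever format the chosen base model expects.

```python
import json

# Hypothetical support records, invented for this example.
tickets = [
    {
        "question": "How do I reset my password?",
        "answer": "Open Settings, choose Security, then select Reset Password.",
    },
    {
        "question": "Can I change my billing date?",
        "answer": "Yes. Go to Billing and pick a new date under Payment Schedule.",
    },
]

# Hypothetical prompt layout, not a standard required by any library.
PROMPT_TEMPLATE = "### Customer:\n{question}\n\n### Agent:\n"


def to_training_example(ticket):
    """Convert one ticket into a prompt/completion pair."""
    return {
        "prompt": PROMPT_TEMPLATE.format(question=ticket["question"].strip()),
        "completion": ticket["answer"].strip(),
    }


examples = [to_training_example(t) for t in tickets]

# One JSON object per line (JSONL) is a common on-disk format for such datasets.
jsonl = "\n".join(json.dumps(e) for e in examples)
print(jsonl)
```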

Example 2: Medical AI Assistant

Goal: Adapt a general LLM for medical question answering.

Workflow:

• Use medical datasets and clinical documents.

• Fine-tune only LoRA layers.

• Preserve the original model while improving medical responses.

Result: Lower training cost compared to full medical fine-tuning.

Example 3: AI Coding Assistant

Goal: Improve code generation for a specific programming language or framework.

Workflow:

• Train LoRA adapters using repository code examples.

• Attach adapters during inference.

• Generate framework-specific coding suggestions.

Result: Specialized coding behavior without retraining the entire model.

Practical LoRA Fine-Tuning Steps

Step 1: Install Required Libraries

pip install transformers peft accelerate datasets bitsandbytes

Step 2: Load the Base Model

from transformers import AutoModelForCausalLM

model = AutoModelForCausalLM.from_pretrained("mistralai/Mistral-7B-v0.1")  # Hub model IDs include a version suffix

Step 3: Configure LoRA

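from peft import LoraConfig

config = LoraConfig(
    r=8,                                  # rank of the low-rank update matrices
    lora_alpha=32,                        # scaling numerator; the update is scaled by lora_alpha / r
    target_modules=["q_proj", "v_proj"],  # attention query and value projections
    lora_dropout=0.05,                    # dropout applied on the adapter path
    task_type="CAUSAL_LM",                # tells PEFT the model is used for causal language modeling
)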
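
To get a feel for what these settings imply, the following back-of-the-envelope sketch computes the effective scaling factor and an estimate of the trainable parameter count. The layer shapes and layer count are assumptions about a Mistral-7B-style architecture (hidden size 4096, a 1024-wide value projection, 32 layers) and should be checked against the actual model config before relying on the numbers.

```python
r, lora_alpha = 8, 32
scaling = lora_alpha / r   # factor applied to the B @ A update

# Assumed (out_features, in_features) per targeted module; verify against the model config.
target_shapes = {"q_proj": (4096, 4096), "v_proj": (1024, 4096)}
num_layers = 32            # assumed number of transformer layers

# Each targeted module adds B (out x r) and A (r x in).
per_layer = sum(r * (out_f + in_f) for out_f, in_f in target_shapes.values())
trainable = per_layer * num_layers

# Approximate adapter checkpoint size if stored in 16-bit precision (2 bytes per value).
adapter_mib_fp16 = trainable * 2 / 2**20

print(f"scaling factor: {scaling}")
print(f"trainable parameters: {trainable:,}")
print(f"adapter size at 16-bit: {adapter_mib_fp16:.1f} MiB")
```

Under these assumptions the adapter holds about 3.4 million parameters, a few megabytes on disk, compared with billions of parameters in the base model.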

Step 4: Attach LoRA Adapters

from peft import get_peft_model

model = get_peft_model(model, config)
model.print_trainable_parameters()  # reports trainable vs. total parameter counts

Step 5: Train the Model

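trainer.train()  # assumes `trainer` is a transformers.Trainer (or similar) already built from the PEFT-wrapped model and a tokenized dataset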

Step 6: Save LoRA Adapters

model.save_pretrained("./lora-adapter")

Most Common LoRA Variants

| Variant | Description | Main Advantage |
|---|---|---|
| LoRA | Standard low-rank adaptation. | Efficient fine-tuning. |
| QLoRA | Quantized LoRA training. | Even lower GPU memory usage. |
| AdaLoRA | Adaptive rank allocation. | Better parameter efficiency. |
| DoRA | Weight decomposition extension. | Improved training stability. |
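
The QLoRA idea of keeping the frozen base weight in low precision while the adapters stay in higher precision can be sketched as follows. This is a deliberately simplified illustration: it uses per-row absmax rounding to integers in [-7, 7] stored in `int8`, not the NF4 data type or the packed 4-bit storage used by real QLoRA implementations, and all sizes and function names are assumptions.

```python
import numpy as np

rng = np.random.default_rng(0)
d_out, d_in, r = 8, 16, 2   # hypothetical sizes

W = rng.normal(size=(d_out, d_in)).astype(np.float32)   # original base weight


def quantize_absmax_4bit(W):
    """Per-row symmetric quantization to integers in [-7, 7] (simplified, not NF4)."""
    scale = np.abs(W).max(axis=1, keepdims=True) / 7.0
    q = np.clip(np.round(W / scale), -7, 7).astype(np.int8)
    return q, scale


def dequantize(q, scale):
    """Recover an approximate float weight from the quantized values."""
    return q.astype(np.float32) * scale


# The frozen base weight is kept only in quantized form.
W_q, W_scale = quantize_absmax_4bit(W)

# The adapters stay in floating point and are the only trainable tensors.
A = rng.normal(scale=0.01, size=(r, d_in)).astype(np.float32)
B = np.zeros((d_out, r), dtype=np.float32)


def qlora_forward(x):
    W_hat = dequantize(W_q, W_scale)   # dequantize the frozen weight on the fly
    return W_hat @ x + B @ (A @ x)


x = rng.normal(size=d_in).astype(np.float32)
y = qlora_forward(x)
max_abs_error = float(np.abs(dequantize(W_q, W_scale) - W).max())
print(f"largest quantization error in W: {max_abs_error:.4f}")
```

The quantization error in the base weight is fixed, and the adapters are trained on top of the dequantized weight, which is why the adapter precision matters more than the base precision.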

LoRA vs Full Fine-Tuning

| Feature | LoRA Fine-Tuning | Full Fine-Tuning |
|---|---|---|
| Trainable Parameters | Very small subset | All model parameters |
| GPU Memory Usage | Low | Very high |
| Training Cost | Low | Expensive |
| Storage Size | Small adapter files | Full model checkpoints |
| Training Speed | Faster | Slower |
| Customization Depth | Moderate | Maximum |
| Infrastructure Complexity | Lower | Higher |
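
The memory gap in this comparison can be approximated with a rough estimate of weight, gradient, and optimizer state sizes. The sketch below is a simplification under stated assumptions: a round 7 billion base parameters, a hypothetical 3.4 million adapter parameters, 32-bit Adam training for anything trainable (4 bytes of weights, 4 of gradients, 8 of optimizer moments), and a 16-bit frozen base for LoRA. Activation memory is ignored, so real usage is higher in both cases.

```python
def gib(n_bytes: int) -> float:
    """Convert bytes to GiB."""
    return n_bytes / 2**30


base_params = 7_000_000_000   # hypothetical round figure for a "7B" model
lora_params = 3_400_000       # hypothetical adapter size

BYTES_TRAINABLE_FP32_ADAM = 4 + 4 + 8   # weights + gradients + two Adam moments
BYTES_FROZEN_16BIT = 2                  # frozen weights only, no gradients or optimizer state

# Full fine-tuning: every parameter is trainable.
full_ft_bytes = base_params * BYTES_TRAINABLE_FP32_ADAM

# LoRA: frozen 16-bit base plus a small set of trainable adapter parameters.
lora_bytes = base_params * BYTES_FROZEN_16BIT + lora_params * BYTES_TRAINABLE_FP32_ADAM

print(f"full fine-tuning: about {gib(full_ft_bytes):.1f} GiB")
print(f"LoRA:             about {gib(lora_bytes):.1f} GiB")
```

Almost all of the LoRA figure is the frozen base model itself, which is the part that quantized variants reduce further.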

Advantages of LoRA Fine-Tuning

| Advantage | Explanation |
|---|---|
| Lower Cost | Requires less compute infrastructure. |
| Memory Efficient | Works with limited GPU memory. |
| Faster Iteration | Speeds up experimentation. |
| Easy Deployment | Adapter files are lightweight. |
| Supports Multiple Adapters | One base model can support many tasks. |

| Supports Multiple Adapters | One base model can support many tasks. |

Disadvantages of LoRA Fine-Tuning

| Disadvantage | Explanation |
|---|---|
| Limited Full Adaptation | The low-rank update may not capture changes that require deep, model-wide modification. |
| Adapter Management | Multiple adapters increase operational complexity. |
| Inference Overhead | Unmerged adapters add a small amount of extra computation per layer; merging them into the base weights removes it. |
| Dependent on Base Model | Quality depends heavily on pretrained model quality. |
| Not Ideal for Architecture Changes | Cannot redesign core model structure. |
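
The inference overhead of a separate adapter path can be avoided by folding the adapter into the base weight once training is done. The NumPy sketch below illustrates that merge for a single layer. The sizes, the `alpha` value, and the random stand-ins for trained matrices are assumptions for illustration.

```python
import numpy as np

rng = np.random.default_rng(0)
d_out, d_in, r, alpha = 8, 8, 2, 4   # hypothetical sizes and scaling
scale = alpha / r

W = rng.normal(size=(d_out, d_in))            # frozen base weight
A = rng.normal(scale=0.1, size=(r, d_in))     # stand-in for a trained down projection
B = rng.normal(scale=0.1, size=(d_out, r))    # stand-in for a trained up projection


def forward_with_adapter(x):
    """Unmerged path: base matmul plus two small adapter matmuls."""
    return W @ x + scale * (B @ (A @ x))


# Merge once, offline: the result is an ordinary dense weight of the same shape as W.
W_merged = W + scale * (B @ A)


def forward_merged(x):
    """Merged path: a single matmul, same cost as the original layer."""
    return W_merged @ x


x = rng.normal(size=d_in)
print("outputs match:", np.allclose(forward_with_adapter(x), forward_merged(x)))

# The merge is reversible as long as A and B are kept.
W_restored = W_merged - scale * (B @ A)
```

The trade-off is that a merged model serves only one adapter, so setups that switch between many adapters at runtime usually keep them unmerged. In the PEFT library, the `merge_and_unload()` method performs this kind of merge for LoRA models.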

Alternatives to LoRA

| Alternative | Description | Compared to LoRA |
|---|---|---|
| Full Fine-Tuning | Updates all model parameters. | More powerful but far more expensive. |
| Prompt Engineering | Changes prompts instead of weights. | Simpler but less specialized. |
| Prefix Tuning | Trains prompt-like prefix vectors. | Often trains even fewer parameters than LoRA but is less flexible. |
| Adapters | Inserts trainable modules into networks. | LoRA can be merged into the base weights, avoiding the extra inference latency of inserted modules. |
| QLoRA | Quantized LoRA approach. | Lower memory usage than standard LoRA. |

Summary

LoRA fine-tuning is one of the most important parameter-efficient training methods for modern Large Language Models. It lets developers customize powerful models without the cost of full fine-tuning by training lightweight low-rank adapter matrices instead of updating billions of parameters. This sharply reduces GPU memory usage, training time, and checkpoint size while maintaining strong performance. Because of its efficiency and flexibility, LoRA has become a standard approach for enterprise AI customization, open source LLM adaptation, and resource-constrained AI development.